Caristix Workgroup is designed to help interface analysts and engineers manage the entire interfacing lifecycle. Workgroup provides the following features and functionality:

You can add documents (Word, Excel, PDF, etc.) to the Library in one of the following ways:

Documents will be uploaded to the library and made available from Workgroup.

Documents and folders will be uploaded to the library and made available from Workgroup.

The document(s) will not be uploaded to the server and will only be available from your computer. Other users of the same library will see the shortcut but won’t be able to open it. It acts as a normal Windows shortcut.

You can perform actions on the library from the Main Menu’s Action section, the right-click context menu (right-click a node or blank space), or the Gear icon beside the search bar.

When there is no document highlighted, the available actions are:

When a document is selected, the available actions depend on the document type. Common actions are:

The foundation of Caristix software is profiles. Profiles are another word for interface specifications, specs, or conformance profiles. They are a way to capture the data formats and code sets you need for exchanging information between systems. Profiles provide a list of message types (or trigger events), segments, fields, components, sub-components, data types, and data tables that are specific to a system. The profiles you develop with Caristix software can be used to:

You can either build a spec manually by reading sample HL7 messages over the course of a few days, or you can use Caristix software to automatically build one for you, using the reverse-engineering functionality in our software. Learn about the tasks related to building, scoping, and updating specifications as follows:

In Caristix software, profiles serve as interface documentation. The Library is a repository for all interface specifications: HL7 reference specifications (which come built into Caristix Workgroup software), product specifications, and specifications for the customized mapping and configuration that working interfaces require, as well as any other type of documentation file.

There are several ways to create a profile or specification:

This method is useful when you have a large volume of message types and trigger events to document, based on a specific HL7 version. If your specification is more limited, consider building a profile from individual message elements.

You will need to edit the profile to reflect the specification. Go to Editing a Profile to learn more.

You can also build a profile from individual message elements. This method is useful when the specification you are building is limited to a small subset of an HL7 version and when customization is extensive.

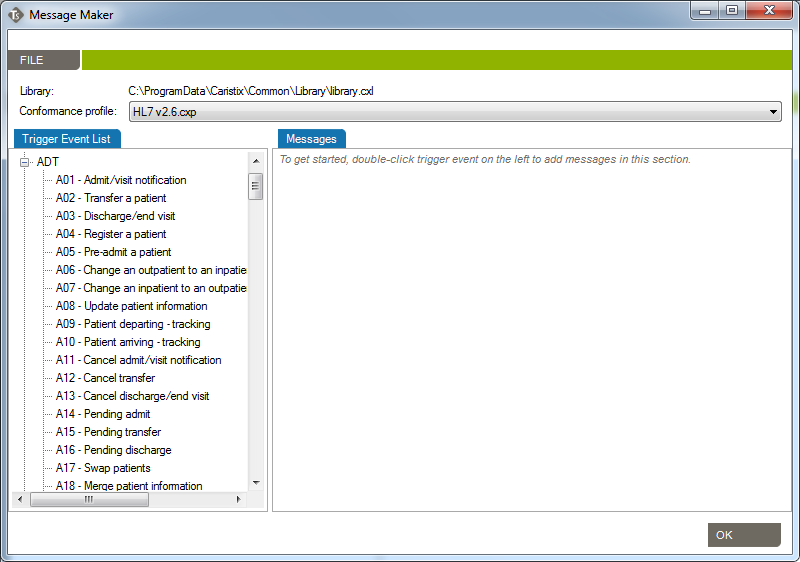

You can add a trigger event or message type from one of the HL7 references or from a previously built profile.

In the Documents pane, double-click on the profile you want to build out.

In the Profile Explorer, right-click on the first node.

| Mode | Why Choose This Option | Action | Example |
|---|---|---|---|
| Import only missing definitions | Choose this if you only want to import elements that don’t already exist in your profile. | This will import definitions that are not present in the current profile and all referenced elements. | Your profile doesn’t have an ADT_A01 trigger event you’d like to add from HL7 v2.6. |
| Replace all definitions | Choose this if you need to replace all existing definitions with the imported definitions. | Replace existing elements with imported elements. This means that you’ll overwrite current definitions. The segment definition will change to the imported definition. | Your profile has an ADT_A08 definition that you would like to replace with the one from v2.6. |
| Blend definitions | Choose this if you need to import a definition from another profile, but also need to keep all definitions from both profiles. | This will import all selected and referenced definitions and will duplicate all elements that are different. | Your profile has a custom ADT_AZZ definition from one source system. A second source system uses a different definition. You need to code an interface for both definitions. |
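The three modes can be pictured with a conceptual sketch that models a profile as a JavaScript `Map` from element names (such as ADT_A01) to definitions. This is an illustration under stated assumptions, not Workgroup’s import logic: the function `importDefinitions`, the string definitions, and the `_2` suffix given to blended duplicates are hypothetical, and the sketch ignores the referenced elements (segments, data types, tables) that a real import also brings in.

```javascript
// Conceptual sketch only; not Workgroup's actual import logic.
// A profile is modeled as a Map from element name to a definition string.
function importDefinitions(target, source, mode) {
  const result = new Map(target); // copy, so the original profile is untouched
  for (const [name, definition] of source) {
    if (!result.has(name)) {
      // Every mode adds definitions that are missing from the target.
      result.set(name, definition);
    } else if (mode === "replace") {
      // Replace: the imported definition overwrites the existing one.
      result.set(name, definition);
    } else if (mode === "blend" && result.get(name) !== definition) {
      // Blend: keep both; the differing import is stored under a new,
      // hypothetical name such as ADT_A08_2.
      let suffix = 2;
      while (result.has(`${name}_${suffix}`)) suffix++;
      result.set(`${name}_${suffix}`, definition);
    }
    // "missing" mode, and identical definitions in "blend", keep the existing entry.
  }
  return result;
}

const myProfile = new Map([["ADT_A08", "custom A08"]]);
const hl7v26 = new Map([["ADT_A01", "v2.6 A01"], ["ADT_A08", "v2.6 A08"]]);

for (const mode of ["missing", "replace", "blend"]) {
  console.log(mode, Object.fromEntries(importDefinitions(myProfile, hl7v26, mode)));
}
```

Building a new `Map` instead of editing the target keeps the original profile intact, which makes the three outcomes easy to compare side by side.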

You can add an event or message without segments, fields, associated data types, or tables. These elements must be defined later. Use this method when the event to be specified has not been formally defined in the HL7 standard.

In the Document pane, double-click on the profile you want to edit. Right-click on the first node and select Add, Trigger Event. A new trigger event is added.

Rename the trigger event and add a description.

Once you have added trigger events, you can edit segments, fields, and data types within your profile. See Editing a Profile for more information.

The Reverse Engineering tool enables you to create a profile from an HL7 log (or HL7 message file). A profile (also known as a specification or message definition) documents the message structure and content, including the use of Z-segments and custom data types.

To open the Reverse-Engineering tool, click PROFILE v2, New, With Reverse-engineerer Wizard... The tool opens to Choose Log Files.

Then click Next to go to the next step. You can also load messages by querying a database.

To begin building a profile based on the messages you just loaded, the software needs an established profile to compare against. Select a profile that most closely matches your messages, then click Next. (Note: the software detects the HL7 version specified in your messages, but you are free to choose another reference.)
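In HL7 v2, the version is carried in MSH-12 (Version ID). The sketch below shows one way a version could be read from a raw message. `readHl7Version` is a hypothetical helper, not part of Workgroup, and it assumes the MSH segment uses its own declared delimiters and is separated from other segments by carriage returns or line feeds.

```javascript
// Hypothetical helper (not a Workgroup API): read MSH-12 (Version ID).
function readHl7Version(message) {
  // Assumes segments are separated by CR, LF, or CRLF.
  const msh = message.split(/\r\n|\r|\n/).find((s) => s.startsWith("MSH"));
  if (!msh || msh.length < 4) return null;
  const fields = msh.split(msh[3]);           // MSH-1 (4th character) is the field separator
  const componentSep = (fields[1] || "^")[0]; // first encoding character in MSH-2
  const msh12 = fields[11] || "";             // MSH-n sits at index n - 1 after the split
  return msh12.split(componentSep)[0] || null; // keep only the first component
}

const sample =
  "MSH|^~\\&|SENDAPP|SENDFAC|RECVAPP|RECVFAC|20240101120000||ADT^A01|MSG00001|P|2.6\r" +
  "PID|1||12345^^^HOSP^MR||DOE^JOHN";

console.log(readHl7Version(sample)); // prints 2.6
```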

The messages load.

(If they load too slowly, you can click the Cancel button in the Loading dialog box and only messages that have loaded thus far will appear.)

If there are files, events, segments, or other data elements you don’t require for the profile, filter them out in this step (read Filter an HL7 Log to learn more), then click Next to go to the next step. To reverse-engineer all messages without filtering, simply click Next.

This step is optional. The software will detect all sending and receiving applications present in the messages. If only one combination is detected, this step is skipped.

You have two options here. You can either generate a single profile combining all applications represented in the message file, or create separate profiles for each sending and receiving application combination. The second option lets you choose specific combinations; the wizard then runs the next five steps for each selected combination in turn.
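In HL7 v2, the sending and receiving applications are carried in MSH-3 (Sending Application) and MSH-5 (Receiving Application). As a rough illustration of the second option, the sketch below groups messages by their MSH-3/MSH-5 pair. `groupByApplications` is a hypothetical name, the sketch assumes MSH is the first segment of each message, and it is not Workgroup’s implementation.

```javascript
// Hypothetical sketch: group raw HL7 v2 messages by sending (MSH-3) and
// receiving (MSH-5) application.
function groupByApplications(messages) {
  const groups = new Map();
  for (const message of messages) {
    const msh = message.split(/\r\n|\r|\n/)[0]; // assume MSH is the first segment
    const fields = msh.split(msh[3]);           // MSH-1 (index 3) is the field separator
    const key = `${fields[2] || ""} -> ${fields[4] || ""}`; // MSH-3 -> MSH-5
    if (!groups.has(key)) groups.set(key, []);
    groups.get(key).push(message);
  }
  return groups;
}

const log = [
  "MSH|^~\\&|LAB|FAC|EHR|FAC|20240101||ORU^R01|1|P|2.5",
  "MSH|^~\\&|ADT|FAC|EHR|FAC|20240101||ADT^A01|2|P|2.5",
  "MSH|^~\\&|LAB|FAC|EHR|FAC|20240102||ORU^R01|3|P|2.5",
];

for (const [pair, msgs] of groupByApplications(log)) {
  console.log(pair, msgs.length); // one line per sending/receiving combination
}
```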

The software sets up the reference profile and messages you selected. Once the processing is complete, simply click Next to continue, as specified on-screen.

Choose between Basic and Advanced field analysis.

This choice lets you analyze fields and data values and assign known data types. If Conformance finds data values and fields that do not match known data types, a new data type will be assigned. You can manually edit the data types later, when the reverse-engineered profile appears in the Library.

Select Basic Field Analysis if:

you are not sure that data types are important to your analysis.

you want to speed up your analysis and focus on identifying details in other message elements such as events and segments.

This choice lets you fully analyze fields and data values. Data values and fields that do not match expected data types will be flagged. You will have the opportunity to either create custom data types to handle non-HL7-compliant data, or assign an existing data type.

Select Advanced Field Analysis if:

you need complete data type analysis for your interfacing project.

you are comfortable creating new data types for further analysis.

This section allows you to set more specific options for data and field analysis.

Once you make your selection in Step 2, click Next.

The software reads through the messages and segments to begin building the profile. When processing is complete, click Next to continue, as specified on-screen.

This step creates the field structure in your profile, assigns data values to user tables, and associates data types to fields and values.

If you selected Basic Field Analysis in Step 3, Basic Mode appears in Step 4. Workgroup processes the fields and data types automatically. When the processing is finished, click Next.

If you selected Advanced Field Analysis in Step 3, Advanced Mode appears in Step 4. Workgroup analyzes each segment for data values and fields that do not match expected data types. In other words, the software automatically performs a conformance check. When non-compliant elements are flagged, the software automatically suggests a data type and field structure. You can accept the suggestion, assign another data type, or create a new data type to handle the non-compliant values and fields.

Edit as needed to reflect maximum field length.

Specify usage.

This tab provides a list of the data values that were flagged as non-compliant, as well as how many times they were found in the messages.
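The counting behind this tab can be pictured with a small sketch: given the values observed for one field and a check for its expected data type, tally each value that fails. The numeric check below is a simplified stand-in for the HL7 NM (numeric) data type and is an assumption, as is the function name `countNonCompliant`; Workgroup’s own checks are not shown here.

```javascript
// Simplified stand-in for the HL7 NM (numeric) data type:
// optional sign, digits, optional decimal part. Not Workgroup's actual rule.
const isNumeric = (value) => /^[+-]?(\d+(\.\d*)?|\.\d+)$/.test(value);

// Count how many times each non-compliant value appears for one field.
function countNonCompliant(values, isValid) {
  const counts = new Map();
  for (const value of values) {
    if (value === "" || isValid(value)) continue; // empty fields are not flagged here
    counts.set(value, (counts.get(value) || 0) + 1);
  }
  return counts;
}

// Hypothetical values observed in a numeric field across a log.
const observed = ["72", "68.5", "N/A", "70", "N/A", "<5", ""];
console.log(countNonCompliant(observed, isNumeric));
```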

When processing is complete, click Next to continue.

This step collects and analyzes the message flows in your logs (if you selected this option in Step 2). These message flows are stored in the profile and are available for future use, for example to generate test messages.

This is the final step in the Reverse-Engineering wizard. Specify a folder to save the profile to, or browse your computer to save it locally. Name the profile and provide a description if needed. Click Save to close the Reverse-Engineering wizard and go to the Documents pane. (If multiple Sending and Receiving Applications were selected, the wizard starts a new analysis at Step 1.)

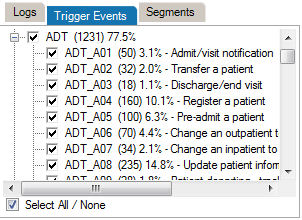

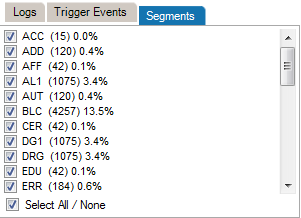

When the reverse engineering wizard is run, you have the option of filtering out unneeded data values, trigger events, and segments. These data elements may not be needed for the profile you are creating, despite their presence in the HL7 message log.

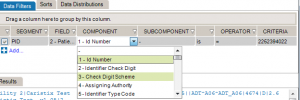

Data filters let you set up queries to find messages containing specific data. Queries can filter on specific message building blocks: segments, fields, components, and subcomponents.

| Operator | Action |
|---|---|
| is | Includes messages that contain this exact data |
| is not | Excludes messages that contain this data |
| =, <, >, <=, >= | Filters on numeric values |
| like | Covers messages that include this data somewhere in the element (e.g., 42 in 4342, 3421, 4286) |
| present | Looks for the presence of a message element (such as a segment, field, etc.) |
| empty | Looks for unpopulated message elements (such as a segment, field, etc.) |
| in | Filters on multiple data values in a message element rather than a single value |
| regex syntax | Filters using .NET regular expression syntax, a more powerful alternative to wildcard expressions |
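As a rough model of what these operators test, the sketch below evaluates one operator against a single element value. It is an approximation for illustration only: the function `matchesFilter`, the operator spellings, and the way `present` and `empty` treat a missing element are assumptions, and JavaScript’s `RegExp` stands in for .NET regular expressions (the two syntaxes overlap but are not identical).

```javascript
// Hypothetical model of the data filter operators, applied to one element value.
// `value` is the element's text, or undefined when the element is absent.
function matchesFilter(value, operator, operand) {
  switch (operator) {
    case "is":      return value === operand;
    case "is not":  return value !== operand;
    case "=":       return Number(value) === Number(operand);
    case "<":       return Number(value) < Number(operand);
    case ">":       return Number(value) > Number(operand);
    case "<=":      return Number(value) <= Number(operand);
    case ">=":      return Number(value) >= Number(operand);
    case "like":    return value !== undefined && value.includes(operand);
    case "present": return value !== undefined;
    case "empty":   return value === undefined || value === "";
    case "in":      return operand.includes(value); // operand is a list of values
    case "regex":   return value !== undefined && new RegExp(operand).test(value);
    default: throw new Error(`Unknown operator: ${operator}`);
  }
}

// "like 42" keeps the three values from the table and drops 1234.
console.log(["4342", "3421", "4286", "1234"].filter((v) => matchesFilter(v, "like", "42")));
console.log(matchesFilter("F", "in", ["M", "F", "U"])); // true
console.log(matchesFilter("150", ">=", "100"));         // true
```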

The data sorting functionality lets you set up sort queries on data values.

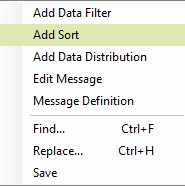

You can use an existing Search and Filter Rules file, or save newly created rules, during the Reverse Engineering filtering step. To do so, right-click anywhere in the Data Filters, Sorts, or Data Distributions section.

In order to use a profile created in another installation of the application, you will need to import the file.

After creating a profile, you will need to edit it. There are three main editing tasks: editing existing message elements, adding new elements, and deleting elements you no longer need.

There are two ways to add segments, depending on your needs. You can either add a segment defined in the profile you’re working on, or add one from a different profile.

Start here:

To create a new Segment definition, click on Add Segment, New. A new Segment definition appears at the bottom of the list.

You can also create a copy of an existing Segment definition: right-click the source definition, select Copy, then right-click again and select Paste. A new Segment definition appears at the bottom of the list.

| Mode | Why Choose This Option | Action | Example |
|---|---|---|---|
| Import only missing definitions | Choose this if you only want to import elements that don’t already exist in your profile. | This will import definitions that are not present in the current profile and all referenced elements. | Your profile doesn’t have a PID segment you’d like to add from HL7 v2.6. |
| Replace all definitions | Choose this if you need to replace all existing definitions with the imported definitions. | Replace existing elements with imported elements. This means that you’ll overwrite current definitions. The segment definition will change to the imported definition. | Your profile has an XPN definition that you would like to replace with the one from v2.6. |
| Blend definitions | Choose this if you need to import a definition from another profile, but also need to keep all definitions from both profiles. | This will import all selected and referenced definitions and will duplicate all elements that are different. | Your profile has a custom ZOD definition from one source system. A second source system uses a different definition. You need to code an interface for both definitions. |

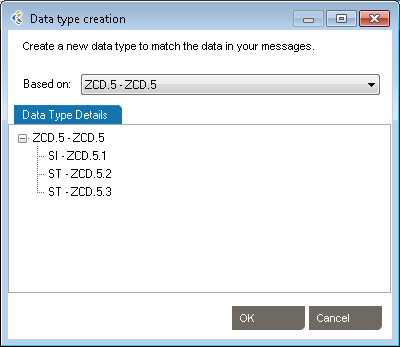

This is useful when you need to add a new data type for a Z-segment or a custom field.

| Mode | Why Choose This Option | Action | Example |
|---|---|---|---|
| Import only missing definitions | Choose this if you only want to import elements that don’t already exist in your profile. | This will import definitions that are not present in the current profile and all referenced elements. | Your profile doesn’t have a TS (time-stamp) data type you’d like to add from HL7 v2.6. |
| Replace all definitions | Choose this if you need to replace all existing definitions with the imported definitions. | Replace existing elements with imported elements. This means that you’ll overwrite current definitions. The data type definition will change to the imported definition. | Your profile has an HD definition that you would like to replace with the one from v2.6. |
| Blend definitions | Choose this if you need to import a definition from another profile, but also keep all definitions from both profiles. | This will import all selected and referenced definitions and will duplicate all elements that are different. | Your profile has a custom TS definition from one source system. A second source system uses a different definition. You need to code an interface for both definitions. |

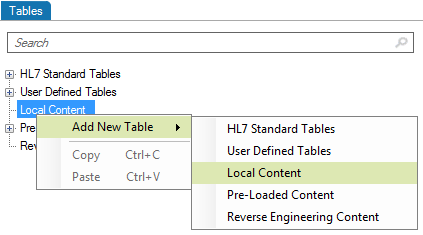

This is useful when you need to add a new table for a Z-segment.

Edit segments and fields, so you capture the data elements pertinent to your specification. Due to the nature of the HL7 standard (HL7 is object-oriented), any changes made are global changes and affect the entire profile.

There are two ways to access segments and fields:

Click the “+” sign to expand a message, then edit the segment.

Right-click a message, and select Segment... A separate window displays the Segment Library. Expand the segment you wish to edit by clicking the plus sign.

To edit each field or individual component, click on the title. Under the Configuration tab, make the changes to each field attribute.

This is useful when you want to reduce the profile to relevant trigger events.

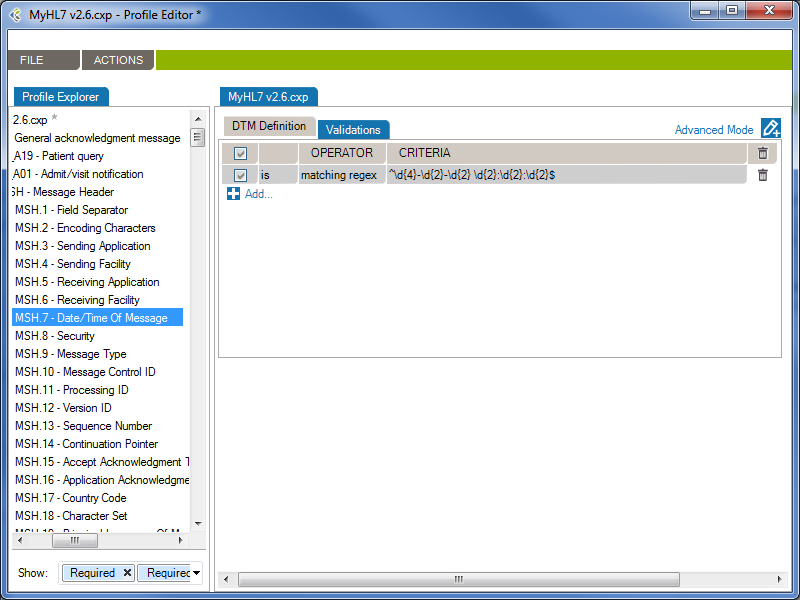

From the Validations tab, you can configure a set of rules that validate whether message content (data) conforms.

In the following example, the rule raises a conformance gap if MSH.7 of a message does not conform to the format “yyyy-mm-dd hh:MM:ss”.

| Operator | Action |
|---|---|
| is | Valid if the element contains this data |
| is not | Valid if the element does not contain this data |
| = | Valid with an exact match to this data (this is like putting quotation marks around a search engine query) |
| < | Less than. Covers validating on numeric values. |
| <= | Less than or equal to. Covers validating on numeric values. |
| > | Greater than. Covers validating on numeric values. |
| >= | Greater than or equal to. Covers validating on numeric values. |
| like | Valid if the element includes this data. Covers validating on numeric values. |
| present | Looks for the presence of a particular message building block (such as a field, component, or sub-component) |
| empty | Looks for an unpopulated message building block (such as a field, component, or sub-component) |
| in | Builds a filter on multiple data values in a message element rather than just one value. |
| in table | Checks whether the data is in a specific table of the Profile. |
| matching regex | Use .NET regular expression syntax to build validations. To be used by advanced users with programming backgrounds. Learn more about regular expressions here: This is also a good utility to help you create complex regular expressions: |
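For the MSH.7 example above, a `matching regex` rule needs a pattern for “yyyy-mm-dd hh:MM:ss”. The sketch below checks that shape in JavaScript. It only enforces digit positions (it does not check that the month or hour is in range), and it uses `[0-9]`, anchors, and quantifiers that both .NET and JavaScript support, although edge cases can differ (for example, .NET’s `$` also matches before a final newline). Treat it as a starting point rather than a tested Workgroup rule; `isValidMsh7` is a hypothetical name.

```javascript
// Pattern for "yyyy-mm-dd hh:MM:ss": 4-digit year, then 2-digit month, day,
// hour, minute, and second. Shape check only; ranges are not enforced.
const MSH7_PATTERN = /^[0-9]{4}-[0-9]{2}-[0-9]{2} [0-9]{2}:[0-9]{2}:[0-9]{2}$/;

function isValidMsh7(value) {
  return MSH7_PATTERN.test(value);
}

console.log(isValidMsh7("2024-03-15 08:30:00")); // true
console.log(isValidMsh7("20240315083000"));      // false: no separators
```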

The JavaScript engine allows you to create custom validation rules, which will be used during the conformance validation of your HL7 messages.

You can add custom JavaScript validation rules at the profile, trigger-event, segment, and data-type levels. The JavaScript rules are evaluated during HL7 message validation, depending on the element of the message being validated.

Profile: Validation rules added at the Profile level will be evaluated first and only once per message.

Trigger-Event: Validation rules added at the Trigger-Event level will be evaluated only once per message and will only be evaluated for matching messages. The MSH.9 – Message Type is used to match messages and trigger-events.

Segment: Validation rules added at the Segment level will be evaluated for each instance of the segment in a message.

Data-Type: Validation rules added at the Data-Type level will be evaluated for each instance of the data type in a message.
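Trigger-event rules run only for messages whose MSH.9 matches the trigger event. The sketch below shows one way that match could be made, assuming MSH.9 is written as message code^trigger event (for example `ADT^A08^ADT_A01`) and the trigger event is written as `ADT^A08`. `matchesTriggerEvent` is a hypothetical helper; Workgroup’s own matching may also consider the message structure component.

```javascript
// Hypothetical helper: does a message's MSH.9 match a trigger event such as "ADT^A08"?
function matchesTriggerEvent(mshSegment, triggerEvent) {
  const fields = mshSegment.split(mshSegment[3]); // MSH-1 (index 3) is the field separator
  const componentSep = fields[1][0];              // first character of MSH-2
  const [code, event] = (fields[8] || "").split(componentSep); // MSH-9 is at index 8
  // In this sketch the trigger event name always uses "^" between its parts.
  const [wantedCode, wantedEvent] = triggerEvent.split("^");
  return code === wantedCode && event === wantedEvent;
}

const msh = "MSH|^~\\&|ADT|FAC|EHR|FAC|20240101||ADT^A08^ADT_A01|42|P|2.5";
console.log(matchesTriggerEvent(msh, "ADT^A08")); // true
console.log(matchesTriggerEvent(msh, "ADT^A01")); // false
```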

Use the callback() method to notify the message validator that an error has occurred. You can pass callback() either an error message as a string or a ValidationError object.
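The rule below illustrates reporting an error with a plain string. Inside Workgroup, `callback()` is provided to your rule by the JavaScript engine; so that the sketch can run on its own (for example in Node.js), it defines a stand-in `callback` that simply collects messages. The `checkMedicalRecordNumber` function and its rule (an all-digit number of 6 to 10 characters) are hypothetical, and how a real rule reads the value being validated depends on the context properties Workgroup exposes.

```javascript
// Stand-in for the engine-provided callback(): here it just records errors.
const reportedErrors = [];
function callback(error) {
  reportedErrors.push(error);
}

// Hypothetical rule: a medical record number must be 6 to 10 digits.
function checkMedicalRecordNumber(mrn) {
  if (!/^\d{6,10}$/.test(mrn)) {
    callback(`Invalid Medical Number: "${mrn}"`); // string form of callback()
  }
}

checkMedicalRecordNumber("0012345"); // valid, nothing reported
checkMedicalRecordNumber("AB-12");   // invalid, one message reported
console.log(reportedErrors);
```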

During HL7 message validation, the JavaScript engine context is updated, allowing you to access the current element being validated. The context has the following properties you can refer to:

The ValidationError object lets you return a customized validation error through the callback method. It exposes the following properties and methods:

Returns a new, empty ValidationError.

```javascript
var validationError = new ValidationError();
callback(validationError);
// Returns a new ValidationError object in the callback method.
```

A summary of the error.

```javascript
var validationError = new ValidationError();
validationError.summary = 'Invalid Medical Number';
// The validation error's summary should be 'Invalid Medical Number'
```

A detailed description of the error.

```javascript
var validationError = new ValidationError();
validationError.description = 'PID.3 does not contain a valid MR - Medical Number for the patient';
// The validation error's description should be 'PID.3 does not contain a valid MR - Medical Number for the patient'
```

Returns the JSON string value of the ValidationError.

```javascript
var validationError = new ValidationError();
validationError.description = 'PID.3 does not contain a valid MR - Medical Number for the patient';
var validationErrorString = validationError.toString();
// validationErrorString should be '{ "description":"PID.3 does not contain a valid MR - Medical Number for the patient"}'
```
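To experiment with the object form outside Workgroup, the sketch below defines a minimal stand-in `ValidationError` with `summary` and `description` properties and a `toString()` that returns JSON, mirroring the members documented above. The stand-in class, the `callback` collector, and the `validatePid3` rule are assumptions for local testing only; the real object’s JSON formatting may differ slightly (the example above shows a space after the opening brace), and inside Workgroup you would use the engine’s own `ValidationError` and `callback`.

```javascript
// Local stand-in for Workgroup's ValidationError (for experimentation only).
class ValidationError {
  constructor() {
    this.summary = undefined;
    this.description = undefined;
  }
  // JSON.stringify omits properties that are still undefined.
  toString() {
    return JSON.stringify({ summary: this.summary, description: this.description });
  }
}

// Local stand-in for the engine-provided callback().
const errors = [];
const callback = (error) => errors.push(error);

// Hypothetical PID.3 check. Assumes default delimiters ("~" between repetitions,
// "^" between components); CX.5 (Identifier Type Code) is the fifth component.
function validatePid3(pid3) {
  const typeCodes = (pid3 || "").split("~").map((rep) => rep.split("^")[4]);
  if (!typeCodes.includes("MR")) {
    const validationError = new ValidationError();
    validationError.summary = "Invalid Medical Number";
    validationError.description =
      "PID.3 does not contain a valid MR - Medical Number for the patient";
    callback(validationError);
  }
}

validatePid3("12345^^^HOSP^PI"); // no MR identifier, so an error is reported
console.log(errors.length, errors[0].toString());
```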

When you publish a profile report to Word, you may need to edit descriptions in Word and then save those edits to the corresponding profile. This is done using the Synchronize function.

The synchronization feature uses internal Word document markups so it can relate any change to the right profile section. When updating the document, make sure the document structure is preserved. We suggest experimenting with this functionality before starting document updates on a large scale. For instance:

Extra Content enables you to build profiles that include more than the official HL7 content.

Basic profiles, without Extra Content, enable you to define message-related structure and content through trigger events, segments, fields, tables, etc. In turn, each of those elements is described through attributes such as Sequence, Name, Optionality, etc. The software includes a set of attributes describing profiles and profile entities; Extra Content lets you add new elements and new attributes.

For instance, you may want to add a change history table to a profile, in order to track changes over time. Or you might want to add an extra column to store source descriptions for code set values. Both of these can be added using Extra Content. This content will be displayed as part of the profile, exactly the same way standard HL7-related elements and attributes are displayed.

An Extra Content Template is a set of extra elements and attributes that you bundle together.

The Extra Content template itself doesn’t contain any data. Instead it defines the containers (or placeholders) for your data. An Extra Content Template represents the structure of the content you add to a profile. You can set up a Template and use it across one or more profiles. Once a profile is associated with an Extra Content Template, you can enrich the profile definition by populating the Extra Content areas.

Please refer to the following sections for more information:

Manage Extra Content Templates through the Extra Content Library. To access the Extra Content Library:

From the Extra Content Library (Manage Extra Content Templates window), you can:

To create a new Extra Content Template, open the “Manage Extra Content Template” window.

Build your templates by adding Extra Content to profile sections as follows:

Add text, images, and grids to the Profile description area.

Once you go back to the profile, you can enter text in the Profile description area.

Once you go back to the profile, you can add an image. To do so, click the Browse… button and select the image you want to include.

Once you go back to the profile, you can add data to your new grid. To do so, click the Add… button to create new grid rows.

You can add Extra Content embedded next to the HL7-defined profile elements. This is a quick way to display needed profile data such as additional descriptions, items to validate, business and mapping rules, etc.

You are now ready to populate the new column with text:

List columns are useful when you’re able to define valid values for the column — in other words, a picklist.

Next, populate the profile:

The new column is now added to the table content. You can pick values from the picklist to assign values to the cell.

To delete an Extra Content Template, open the “Manage Extra Content Template” window.

Note: Extra Content Templates are linked to the data within profiles. If you delete an Extra Content Template, all associated data within your profiles will be deleted as well.

You can modify templates at any time so you can continue to enrich your profiles, as follows:

Note: If you delete an Extra Content Template element, this component will be deleted in every profile associated with this template. Learn more about deleting Extra Content Templates.

To rename an Extra Content Template, open the “Manage Extra Content Template” window.

To copy/duplicate an Extra Content Template, open the “Manage Extra Content Template” window.

Copying an Extra Content Template can be quite useful when you want to modify an existing template without impacting all associated profiles. Create a new but similar template, and then migrate profiles to the new template one by one.

Copying is also a way to “backup” a template before modifying it.

Link an Extra Content Template to a profile as follows:

You can now add Extra Content to your profile based on the newly assigned template.

Unlink an Extra Content Template from a profile as follows:

Workgroup automatically manages Extra Content Templates when you import profiles. If the template is not already available, it will be imported along with the profile.

Extra Content can be included in the Gap Analysis process.

Ensure that both profiles are using the same Extra Content Template. Extra content will automatically appear in the list of attributes available for Gap Analysis. Learn about Gap Analysis attributes.

Generate profile reports of an interface specification:

Note: You can also sync your profile. This feature allows a user to update the Word document directly and synchronize the profile library with the uploaded document content.

The Attributes tab describes an element’s attributes.

| RESTRICTED VALUES: | Optional. Restrictions are used to define acceptable values for XML attributes. |

From the Actions menu, you’ll have access to:

Complex types describe the permitted content of an element, including its element and text children and its attributes. A complex type definition consists of a set of attribute uses and a content model. The types of content model include element-only content, in which no text may appear (other than whitespace, or text enclosed by a child element); simple content, in which text is allowed but child elements are not; empty content, in which neither text nor child elements are allowed; and mixed content, which permits both elements and text to appear. A complex type can be derived from another complex type by restriction (disallowing some elements, attributes, or values that the base type permits) or by extension (allowing additional attributes and elements to appear).

XML Type Editor in Workgroup works as follows:

The Types tab describes the structure of a type. You can add the following elements to the structure of a type.

| Structure element | Description |
|---|---|
| Element: | A complex element is an XML element that contains other elements and/or attributes. |
| Element Group: | The group element is used to define a group of elements to be used in complex type definitions. |
| Sequence: | The sequence element specifies that the child elements must appear in a sequence. Each child element can occur from 0 to any number of times. |
| Choice: | The XML Schema choice element allows only one of the elements contained in the declaration to be present within the containing element. |

The Definition tab describes an element’s properties.

| Property | Description |
|---|---|
| Name: | Specifies a name for the element. This attribute is required if the parent element is the schema element. |
| Type: | Optional. Specifies either the name of a built-in data type, or the name of a simpleType or complexType element. |
| Min Occurs: | Optional. Specifies the minimum number of times this element can occur in the parent element. The value can be any number >= 0. Default value is 1. This attribute cannot be used if the parent element is the schema element. |
| Default: | Optional. Specifies a default value for the element (can only be used if the element’s content is a simple type or text only). |
| Fixed: | Optional. Specifies a fixed value for the element (can only be used if the element’s content is a simple type or text only). |
| Description: | Optional. Describes the element in natural language. |

The Attributes tab describes an element’s attributes.

| Property | Description |
|---|---|
| SOURCE: | Specifies the attribute’s owner. |
| ID: | Specifies a unique ID for the attribute. |
| TYPE: | Optional. Specifies a built-in data type or a simple type. The type attribute can only be present when the content does not contain a simpleType element. |
| USE: | Optional. Specifies how the attribute is used. Can be one of the following values: optional (the attribute is optional; this is the default), prohibited (the attribute cannot be used), or required (the attribute is required). |
| DEFAULT: | Optional. Specifies a default value for the attribute. Default and fixed attributes cannot both be present. |
| FIXED: | Optional. Specifies a fixed value for the attribute. Default and fixed attributes cannot both be present. |
| DESCRIPTION: | Optional. Describes the attribute in natural language. |
| RESTRICTED VALUES: | Optional. Restrictions are used to define acceptable values for XML attributes. |

Schematron is a rule-based validation language for making assertions about the presence or absence of patterns in XML trees. It is a structural schema language expressed in XML using a small number of elements and XPath.

Schematron is capable of expressing constraints in ways that other XML schema languages like XML Schema and DTD cannot. For example, it can require that the content of an element be controlled by one of its siblings. Or it can request or require that the root element, regardless of what element that is, must have specific attributes. Schematron can also specify required relationships between multiple XML files.

Constraints and content rules may be associated with “plain-English” validation error messages, allowing translation of numeric Schematron error codes into meaningful user error messages.

XML Schematron Editor in Workgroup works as follows:

The Schematron schema language differs from most other XML schema languages in that it is a rule-based language that uses path expressions instead of grammars. This means that instead of creating a grammar for an XML document, a Schematron schema makes assertions that are applied to a specific context within the document. If the assertion fails, a diagnostic message that is supplied by the author of the schema can be displayed.

One advantage of a rule-based approach is that, in many cases, the Schematron rules can easily be created by rephrasing the desired constraint written in plain English. For example, a simple content model can be written like this: “In the XML instance document, the Person element should have an attribute Title and contain the elements Name and Gender in that order. If the value of the Title attribute is ‘Mr’, the value of the Gender element must be ‘Male’.”

In this sentence, the context in which the assertions should be applied is clearly stated as the Person element, and there are four different assertions:

The context element (Person) should have an attribute Title.

The context element should contain the child elements Name and Gender.

The child element Name should appear before the child element Gender.

If the attribute Title has the value ‘Mr’, the element Gender must have the value ‘Male’.

To implement the path expressions used in the rules, Schematron uses XPath with various extensions provided by XSLT.

It has already been mentioned that Schematron makes various assertions based on a specific context in a document. Both the assertions and the context make up two of the four layers in Schematron’s fixed four-layer hierarchy:

The bottom layer in the hierarchy is the assertions, which specify the constraints that should be checked within a specific context of the XML instance document. In a Schematron schema, the typical element used to define assertions is assert. The assert element has a test attribute, which holds an XPath expression. In the preceding example, there were four assertions made on the document in order to specify the content model, namely:

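The context element (Person) should have an attribute Title.

The context element should contain the child elements Name and Gender.

The child element Name should appear before the child element Gender.

If the attribute Title has the value ‘Mr’, the element Gender must have the value ‘Male’.

Written as Schematron assertions, these would be expressed as follows: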

| Type | Test | Text |
|---|---|---|
| Assert | @Title | The element Person must have a Title attribute. |
| Assert | count(*) = 2 and count(Name) = 1 and count(Gender) = 1 | The element Person should have the child elements Name and Gender. |
| Assert | name(*[1]) = 'Name' | The element Name must appear before element Gender. |
| Assert | (@Title = 'Mr' and Gender = 'Male') or @Title != 'Mr' | If the Title is “Mr” then the gender of the person must be “Male”. |

If you are familiar with XPath, these assertions are easy to understand, and even for people with limited XPath experience they are fairly straightforward. The first assertion tests for the occurrence of an attribute Title. The second assertion tests that the total number of children is equal to 2 and that there is one Name element and one Gender element. The third assertion tests that the first child element is Name, and the last assertion tests that if the person’s title is ‘Mr’, the gender of the person must be ‘Male’.

If the condition in the test attribute is not fulfilled, the content of the assertion element is displayed to the user.

Each of these assertions has a condition that is evaluated, but the assertion does not define where in the XML instance document this condition should be checked. For example, the first assertion tests for the occurrence of the attribute Title, but it is not specified on which element in the XML instance document this assertion is applied. The next layer in the hierarchy, the rules, specifies the location of the contexts of assertions.

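The Assert type is used to tag positive assertions about a document: its text is reported when the test evaluates to false.

The Report type is used to tag negative assertions about a document: its text is reported when the test evaluates to true.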

The rules in Schematron are declared by using the rule element, which has a context attribute. The value of the context attribute must be an XPath expression that selects one or more nodes in the document. As the name suggests, the context attribute specifies the context in the XML instance document where the assertions should be applied. In the previous example the context was specified to be the Person element, and a Schematron rule with the Person element as context would simply be:

| Id | Abstract | Context |
|---|---|---|
|  | False | Person |

Since rules group together all the assertions that share the same context, the assertions are declared as children of the rule element. For the previous example, this means that the complete Schematron rule would be:

The element Person must have a Title attribute.

The element Person should have the child elements Name and Gender.

The element Name must appear before element Gender.

If the Title is "Mr" then the gender of the person must be "Male".

This means that all the assertions in the rule will be tested on every Person element in the XML instance document. If the context is not all the Person elements, it is easy to change the XPath location path to define a more restricted context. The value Database/Person, for example, sets the context to be all the Person elements that have the element Database as their parent.
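As an illustration of how a rule ties assertions to a context, the following minimal Python sketch applies the four assertions above to every node selected by a context, using only the standard library's xml.etree.ElementTree. It is not a Schematron processor and not how Workgroup evaluates rules: the helper names (check_rule, title_gender_ok, ASSERTIONS) and the sample document are hypothetical, and the XPath tests are hand-translated into Python predicates.

```python
import xml.etree.ElementTree as ET

def title_gender_ok(person):
    title = person.get("Title")
    # Mirrors (@Title = 'Mr' and Gender = 'Male') or @Title != 'Mr'.
    # In XPath 1.0 both comparisons are false when Title is absent.
    return (title == "Mr" and person.findtext("Gender") == "Male") or (
        title is not None and title != "Mr"
    )

# Each assertion pairs a test with the text reported when the test is false.
ASSERTIONS = [
    (lambda p: "Title" in p.attrib,
     "The element Person must have a Title attribute."),
    (lambda p: len(p) == 2 and len(p.findall("Name")) == 1
     and len(p.findall("Gender")) == 1,
     "The element Person should have the child elements Name and Gender."),
    (lambda p: len(p) > 0 and p[0].tag == "Name",
     "The element Name must appear before element Gender."),
    (title_gender_ok,
     'If the Title is "Mr" then the gender of the person must be "Male".'),
]

def check_rule(context_nodes, assertions):
    """Apply every assertion to every node selected by the rule context."""
    failures = []
    for index, node in enumerate(context_nodes, start=1):
        for test, text in assertions:
            if not test(node):
                failures.append((index, text))
    return failures

doc = ET.fromstring(
    '<Database><Person Title="Mr"><Name>Eddie</Name>'
    '<Gender>Female</Gender></Person></Database>'
)
# Context "Person": every Person element in the document.
print(check_rule(doc.iter("Person"), ASSERTIONS))
# [(1, 'If the Title is "Mr" then the gender of the person must be "Male".')]
```

Passing doc.iter("Person") corresponds to the context Person (every Person element). A narrower context such as Database/Person would correspond to doc.findall("Person") when Database is the root element.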

The third layer in the Schematron hierarchy is the pattern, declared using the pattern element, which is used to group together different rules. The pattern element also has a name attribute that will be displayed in the output when the pattern is checked. For the preceding assertions, you could have two patterns: one for checking the structure and another for checking the co-occurrence constraint. Since patterns group different rules together, Schematron is designed so that rules are declared as children of the pattern element. This means that the previous example, using the two patterns, would look like

The element Person must have a Title attribute.

The element Person should have the child elements Name and Gender.

The element Name must appear before element Gender.

If the Title is "Mr" then the gender of the person must be "Male".

The name of the pattern will always be displayed in the output, regardless of whether the assertions fail or succeed. If an assertion fails, the output will also contain the content of the assertion element, together with additional information to help you locate the source of the failed assertion. For example, if the co-occurrence constraint above were violated by having Title=’Mr’ and Gender=’Female’, the following diagnostic would be generated by Schematron:

From pattern "Check structure":

From pattern "Check co-occurrence constraints":

Assertion fails: "If the Title is "Mr" then the gender of the person must be "Male"." at /Person[1]

`<Person Title="Mr">...</>`

The pattern names are always displayed, while the assertion text is only displayed when the assertion fails. The additional information starts with an XPath expression that shows the location of the context element in the instance document (in this case the first Person element) and then on a new line the start tag of the context element is displayed.

The assertion to test the co-occurrence constraint is not trivial, and in fact this rule could be written in a simpler way by using an XPath predicate when selecting the context. Instead of having the context set to all Person elements, the co-occurrence constraint can be simplified by only specifying the context to be all the Person elements that have the attribute Title=’Mr’ (that is, Person[@Title='Mr']). If the rule were specified using this technique, the co-occurrence constraint could be described like this:

If the Title is "Mr" then the gender of the person must be "Male".

By moving some of the logic from the assertion to the specification of the context, the complexity of the rule has been decreased. This technique is often very useful when writing Schematron schemas.
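The same technique in sketch form: in the following Python example (standard library only, names and sample data hypothetical), the predicate moves into the context path, so the remaining test only checks the gender. ElementTree supports only a small XPath subset, but the [@Title='Mr'] predicate is part of it.

```python
import xml.etree.ElementTree as ET

# Narrowed rule context: only Person elements whose Title is 'Mr'.
# ".//" searches below the root element (the root itself is not matched).
MR_CONTEXT = ".//Person[@Title='Mr']"

def check_mr_rule(root):
    """Return the names of 'Mr' Person elements whose Gender is not 'Male'."""
    failures = []
    for person in root.findall(MR_CONTEXT):
        # The context does the filtering, so the test is simply Gender = 'Male'.
        if person.findtext("Gender") != "Male":
            failures.append(person.findtext("Name"))
    return failures

people = ET.fromstring(
    "<Database>"
    '<Person Title="Mr"><Name>Eddie</Name><Gender>Male</Gender></Person>'
    '<Person Title="Ms"><Name>Jane</Name><Gender>Female</Gender></Person>'
    '<Person Title="Mr"><Name>Pat</Name><Gender>Female</Gender></Person>'
    "</Database>"
)
print(check_mr_rule(people))  # ['Pat']
```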

*[Reference: www.xml.com/pub/a/2003/11/12/schematron.html]

Gap analysis is an HL7 interface scoping activity. When you build an HL7 interface, before jumping into the code, you need to understand what data you are going to play with. Most importantly, you need to understand the differences between source and destination systems at the messaging level. Before jumping into integration engine configuration, you need to know what to configure. Some of your questions are likely to include the following:

These are often challenging questions to answer.

The Gap Analysis functionality in Workgroup helps you identify these differences in a matter of a few seconds. Gap Analysis enables the following:

Determine gaps between 2 profiles or between a profile and a set of messages.

To list the differences (including differences in data structure and data content) between two profiles, follow this procedure:

Both profiles are loaded and you are taken to the Gap Analysis Workbench. You can now refine your comparison criteria. By default, data elements are not selected. Select the data elements (data structure and/or code sets) you want to compare. See Refine Gap Analysis Criteria for more information.
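Conceptually, a gap is a difference between the two profiles for one element and one selected attribute. The following Python sketch illustrates that idea under stated assumptions: profiles are modeled as hypothetical dictionaries keyed by field (for example PID.3), the attribute values are illustrative only and not taken from any HL7 version, and this is not Workgroup's actual comparison algorithm or file format. In this sketch, elements present in only one profile are reported regardless of the selected attributes.

```python
# Hypothetical profile shape: element key -> {attribute name: value}.
# The values are illustrative only.
reference = {
    "PID.3": {"Optionality": "R", "Length": 250, "Data Type": "CX"},
    "PID.8": {"Optionality": "O", "Length": 1, "Data Type": "IS"},
}
compared = {
    "PID.3": {"Optionality": "R", "Length": 20, "Data Type": "CX"},
    "PID.19": {"Optionality": "O", "Length": 16, "Data Type": "ST"},
}

def gap_analysis(reference, compared, attributes):
    """List (key, attribute, reference value, compared value) gaps."""
    gaps = []
    for key in sorted(reference.keys() | compared.keys()):
        ref, cmp_ = reference.get(key), compared.get(key)
        if ref is None or cmp_ is None:
            # The element exists in only one of the two profiles.
            gaps.append((key, "element",
                         "missing" if ref is None else "present",
                         "missing" if cmp_ is None else "present"))
            continue
        # Only the selected attributes take part in the comparison.
        for attr in attributes:
            if ref.get(attr) != cmp_.get(attr):
                gaps.append((key, attr, ref.get(attr), cmp_.get(attr)))
    return gaps

for gap in gap_analysis(reference, compared, ["Optionality", "Length"]):
    print(gap)
```

Selecting an empty attribute list mirrors the default state where no data element attribute is compared: only the structural gaps remain.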

To list the differences (including differences in data structure and data content) between a profile and a set of HL7 messages (probably a few thousand), follow this procedure:

The profile and the HL7 messages are loaded and messages are analyzed. Depending on the number of messages you provided, the message analysis might take several minutes. A progress window tracks the process.

Once the loading process is complete, you are taken to the Gap Analysis Workbench. You can now refine your comparison criteria. By default, no data element is selected.

Gap Analysis Filters are used to remove irrelevant gaps. Each filter contains a set of preset options which will optimize the Gap Analysis detection process in order to show you only the “dangerous” gaps. A Gap Analysis Filter contains:

When you start a new Gap Analysis, after selecting the profiles to compare, you will be asked to select a Gap Analysis Filter.

There are 4 pre-defined Filters that can be used.

This filter should be used when both systems exchange messages between each other.

This filter should be used when the first system sends messages to the second system.

This filter should be used when the first system receives messages coming from the second system.

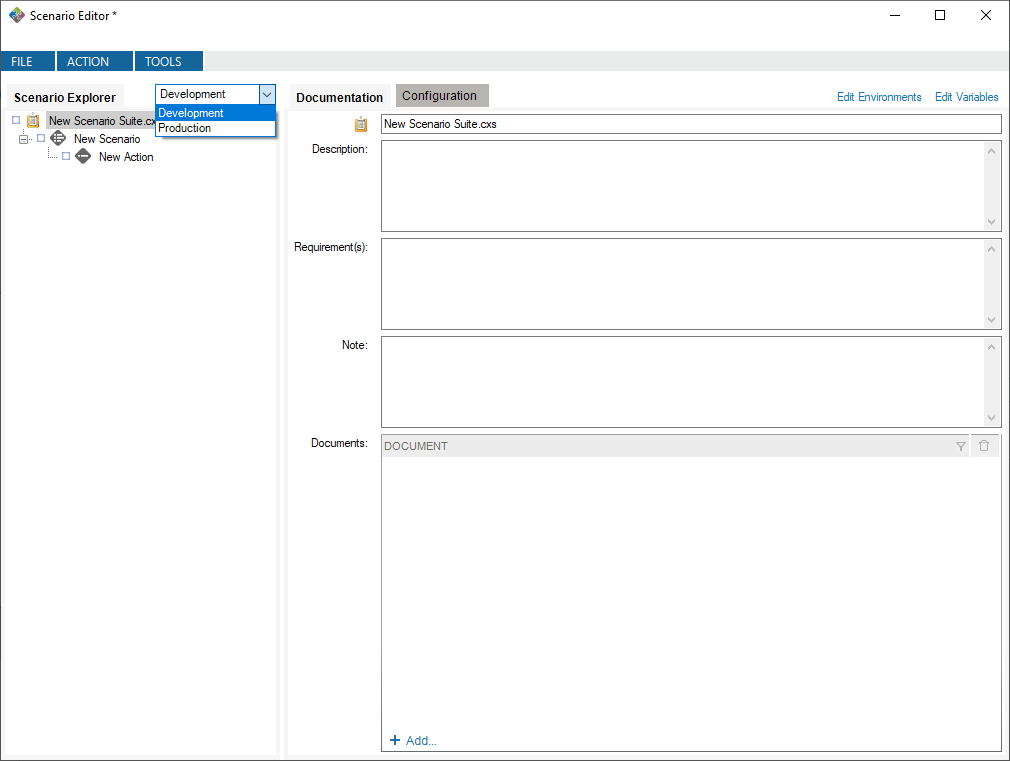

This filter should be used when you want to compare profiles representing the same system. Ex: Comparing reverse-engineered profiles coming from sample messages of your development and production environments.

While working with the Gap Analysis Workbench, you can edit computed attributes, options and difference filters. These can then be saved as a Custom Filter, which can be reused for other gap analyses.

In the Gap Analysis Filter Selection window, you’ll be able to Select a “recent Gap Analysis Filter”, or Load a previously saved filter from your Library.

You can also set your choice as the default filter for subsequent gap analyses, so you will not be asked to select a Gap Analysis Filter again. At any time, you can apply another Gap Analysis Filter in the Gap Analysis Workbench with File > Gap Analysis Filter > Change Filter…

Here is a quick look at the Gap Analysis Workbench.

1. Structure/Data Element: In this section, you choose which elements from your profiles will be compared.

2. Attributes: In this section, you choose which attributes of the previously selected elements will be compared.

3. Options: In this menu, you can set options to improve the accuracy of the Gap Analysis comparison process.

4. Differences Filters: Differences Filters are used to show differences that match specific criteria. In other words, they discard the differences that aren’t relevant to your analysis.

5. Gap Analysis Results: In this section, you see all differences between the selected elements of your profiles, based on your Gap Analysis filter (Attributes, Options, Differences Filters).

Gap Analysis in Workgroup helps you focus on identifying and scoping differences upfront, instead of spending time downstream on the validation of an overly generic interface. The gaps you find are actually a to-do list of items you need to handle when configuring the interface. Each to-do list item will need to be handled in one of several ways:

The to-do list aspect of Gap Analysis serves as a starting point for your project task list documentation. Create a document automatically using the Export as Excel document functionality.

If a profile is created through reverse-engineering, you can view where the gaps in Optionality (for Segments and Fields) or Length (for Fields) come from by right-clicking on the cell and selecting View Examples… This will display all the messages where the gap occurred for these profiles.

By default, when you first see the Gap Analysis Workbench, nothing is selected. When you run a Gap Analysis, you select the data elements that matter to your interface.

The Gap Analysis Workbench is split into 2 sections:

At the top of the Criteria Section, you’ll see the list of the messages, segments, fields, and data tables that are contained in the 2 profiles (or profile and messages) you are comparing. Select an element to include it in the Gap Analysis.

*(Steps prior to these examples)

**Choose HL7 v2.6 as the Reference and HL7 v2.1 as the Compared Profile.

By default, comparisons within Gap Analysis are on all attributes. Depending on your project and/or your context, you might need to focus on a subset of attributes and remove others. You can refine the comparison algorithm to narrow your comparison as follows.

The comparison is updated using the active attributes. Once in the Gap Analysis Workbench, you can refine the criteria used to evaluate gaps.

Each HL7 message element is described by a set of attributes. The following table maps attributes to message elements.

| Attribute | Trigger Event | Segment | Field | Table |
|---|---|---|---|---|
| Event | | | | |
| Name | | | | |
| Sequence | | | | |
| Optionality | | | | |
| Repetition | | | | |
| Length | | | | |
| Data Type | | | | |
| Table Id | | | | |
| Label | | | | |
| Comments | | | | |

Refer to the Extra Content and Gap Analysis section for details around extra content and gap analysis.

Several options are available in the Gap Analysis window.

Here is a list of basic options:

| Option | Description |
|---|---|
| Hide Unused Columns: | If enabled, this option hides columns that refer to non-computed attributes. Example: if you don’t compare the length of fields, the LENGTH column in the Field section is hidden from your gap analysis results. |
| Ignore Case: | If enabled, this option compares strings case-insensitively. |
| Use Fuzzy Matching: | If enabled, this option matches names that are similar to each other. Ex: “Admit a patient” and “Admit Patient” are considered equivalent. |
| Use Strict Usage Comparison: | If enabled, this option treats each distinct segment/field optionality value as different. Otherwise, segments/fields that are not “Required” are considered “Optional”. |
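To make these basic options concrete, here is a Python sketch of how Ignore Case, Use Fuzzy Matching and Use Strict Usage Comparison could behave. The similarity measure (difflib.SequenceMatcher) and the 0.85 threshold are assumptions chosen for illustration; the document does not say which fuzzy-matching algorithm Workgroup uses. Optionality is expressed with HL7 usage codes (R = Required, O = Optional, C = Conditional), and the function names are hypothetical.

```python
from difflib import SequenceMatcher

def names_match(a, b, ignore_case=True, fuzzy=True, threshold=0.85):
    """Compare two element names the way the basic options might."""
    if ignore_case:
        a, b = a.lower(), b.lower()
    if a == b:
        return True
    # Fuzzy matching: similar-enough names count as equivalent.
    return fuzzy and SequenceMatcher(None, a, b).ratio() >= threshold

def normalize_usage(usage, strict=False):
    """Without strict comparison, anything not Required counts as Optional."""
    if strict:
        return usage
    return "R" if usage == "R" else "O"

def usage_differs(a, b, strict=False):
    return normalize_usage(a, strict) != normalize_usage(b, strict)

print(names_match("Admit a patient", "Admit Patient"))  # True
print(usage_differs("O", "C"), usage_differs("O", "C", strict=True))  # False True
```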

Here is a list of more complex options that allow you to maximize usage of Gap Analysis:

You can include Extra Content in the Gap Analysis process under the following conditions:

Once these two conditions are met, the Extra Content elements are managed the exact same way as the other elements. Gaps in the Extra Content elements will also be displayed.

You may want to save the current state of the gap analysis workbench to continue work later. To do so:

A .cxg file describing the current state of the gap analysis workbench is created. You can then reopen it:

Differences Filters are used to show differences that match specific criteria. In other words, they discard the differences that don’t match these criteria.

This can be used, for instance, to show only differences where the Field is Required in the Receiving Application but Optional (or Missing) in the Sending Application.

If a section contains active filters, the filter button is shown as a full filter icon.

| Setting | Description |
|---|---|
| Source: | Select the side from which you want to perform a filter. |
| Column: | Select the column from which you want to get the value to be compared. |
| Is/Is Not: | Include/Exclude differences that match the filter. |
| Operator: | Select the operator that you want the criteria and the column’s value to match. |
| Criteria: | Enter the criteria that you want to compare with the column’s value. |
| Checkbox: | Activate or deactivate filter (toggle on or off). |
| And/Or: | AND: applies both these filters. OR: applies either of these filters. |
| Parentheses: | Used for nested filters. |

| Operator | Description |
|---|---|
| = | Covers values with an exact match to this data (this is like putting quotation marks around a search engine query). |
| > | Greater than. Covers filtering on numeric values. |
| >= | Greater than or equal to. Covers filtering on numeric values. |
| < | Less than. Covers filtering on numeric values. |
| <= | Less than or equal to. Covers filtering on numeric values. |
| containing | Covers values that include this value. |
| present | Looks for the presence of a particular column. |
| empty | Looks for an unpopulated column. |
| matching regex | Use .NET regular expression syntax to build filters. For advanced users with programming backgrounds. |
| in | Builds a filter on multiple data values rather than just one value. |
| = Other Specification Value | Exact match to the other profile’s column value. |
| > Other Specification Value | Greater than the other profile’s column value. Covers filtering on numeric values. |
| >= Other Specification Value | Greater than or equal to the other profile’s column value. Covers filtering on numeric values. |
| < Other Specification Value | Less than the other profile’s column value. Covers filtering on numeric values. |
| <= Other Specification Value | Less than or equal to the other profile’s column value. Covers filtering on numeric values. |
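The following Python sketch shows how the operators and the Is/Is Not setting could combine to keep or discard a difference row. The row layout (a Reference and a Compared side, each a dictionary of column values, with None meaning missing) is a hypothetical model, and Python's re module stands in for .NET regular expressions, whose syntax differs in places. The example filter reproduces the earlier use case (Required on one side, Optional or missing on the other), assuming the Reference profile is the receiving application.

```python
import operator
import re

NUMERIC = {">": operator.gt, ">=": operator.ge, "<": operator.lt, "<=": operator.le}

def matches(value, op, criteria=None):
    """Evaluate one filter condition against a column value (None = missing)."""
    if op == "present":
        return value is not None
    if op == "empty":
        return value is None or value == ""
    if value is None:
        return False
    if op == "=":
        return value == criteria
    if op == "containing":
        return criteria in value
    if op == "in":
        return value in criteria
    if op == "matching regex":
        # Python's re module, used here as a stand-in for .NET regex.
        return re.search(criteria, value) is not None
    if op in NUMERIC:
        return NUMERIC[op](float(value), float(criteria))
    raise ValueError(f"unsupported operator: {op}")

def keep(row, conditions):
    """Basic Mode: a row is kept only if every condition holds."""
    # Each condition is (source, column, operator, criteria, is_not);
    # "!= is_not" flips the result when Is Not is selected.
    return all(
        matches(row[source].get(column), op, criteria) != is_not
        for source, column, op, criteria, is_not in conditions
    )

rows = [
    {"Key": "PID.8", "Reference": {"Optionality": "R"}, "Compared": {"Optionality": "O"}},
    {"Key": "PID.19", "Reference": {"Optionality": "R"}, "Compared": {}},
    {"Key": "PID.3", "Reference": {"Optionality": "R"}, "Compared": {"Optionality": "R"}},
]
# Required on the Reference side, but not Required (or missing) on the Compared side.
conditions = [
    ("Reference", "Optionality", "=", "R", False),
    ("Compared", "Optionality", "=", "R", True),
]
print([row["Key"] for row in rows if keep(row, conditions)])  # ['PID.8', 'PID.19']
```

The Other Specification Value operators fit the same function by passing the other profile's column value as the criteria, for instance matches(reference_length, ">", compared_length).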

While editing your filters, you can switch between Basic and Advanced Mode. Advanced Mode shows advanced settings for your filters. These settings help in the construction of more complex filters using AND/OR operators and parentheses for nesting. In Basic Mode, each filter is applied one after the other.

If your filters contain advanced settings and you switch back to the Basic Mode, these settings will be lost.

Differences Filter Templates are reusable filters that can be applied to many gap analyses. A built-in template can be selected from the drop-down list at the top left of the filters dialog.

You can hide a difference (Gap Analysis Result row) automatically. To do so, right-click the row you want to hide, then click “Hide [row key] difference”. This adds a new difference filter entry and hides the selected row.

Gaps serve as a to-do list of items you need to handle when configuring the interface. The list of gaps serves as a starting point for project task list documentation. To export gaps as an Excel document:

Microsoft Excel (or the program associated with .xlsx documents) will automatically start.

Message comparison helps you compare 2 sets of messages at the data level. This is useful in several cases, such as:

To compare a set of HL7 messages:

- Go to GAP ANALYSIS, Message Comparison…

- Click the Select messages to compare… zone

- Add the messages you want to compare. Messages can come from:

  - File: Click Add… to add one or several files containing messages.
  - Database: Select a database to query and from which to retrieve messages.
  - Integration Engine: Select an integration engine data depot (Ensemble, Rhapsody, Iguana, Mirth and others) to retrieve messages directly from the integration engine (connector required).

- Do the same for the other message set, clicking the other Select messages to compare… zone on the right.

Once the comparison is complete, differences are highlighted in red and the total number of differences between messages is displayed.

For a more detailed view of a message pair or message differences, double-click the message pair you want to compare. Navigate through the tree view, field by field, to see the differences.

Click on the gray zone at the bottom of the screen to view more details about each difference. Double-clicking on a grid row helps you navigate through the differences.

By default, messages will be compared based on their position. The first message on the left is compared with the first message on the right, the second with the second and so on.

Since message files don’t always contain the same number of messages, and messages are not always sorted in the same order, you can configure the application to match messages based on field values. To configure the message matching criteria:
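Matching on a field value rather than on position can be sketched as follows. This Python example is an illustration under stated assumptions, not Workgroup's implementation: it uses a naive HL7 v2 parser (segments split on carriage returns, fields on |, no escape or repetition handling), a single hypothetical key field such as MSH.10 (Message Control ID), and assumes key values are unique within a message set.

```python
from itertools import zip_longest

def field_value(message, spec):
    """Return a field such as 'MSH.10' or 'PID.3' ('' if absent). Naive parser."""
    segment_id, number = spec.split(".")
    for line in message.strip().split("\r"):
        fields = line.split("|")
        if fields[0] == segment_id:
            # MSH-1 is the field separator itself, so MSH-n is at index n - 1.
            index = int(number) - 1 if segment_id == "MSH" else int(number)
            return fields[index] if index < len(fields) else ""
    return ""

def pair_messages(left, right, key_field=None):
    """Pair messages by position, or by the value of key_field if given."""
    if key_field is None:
        # Default behavior: first with first, second with second, and so on.
        return list(zip_longest(left, right))
    by_key = {field_value(m, key_field): m for m in right}
    pairs = [(m, by_key.pop(field_value(m, key_field), None)) for m in left]
    # Right-hand messages that matched nothing are reported as unmatched.
    pairs.extend((None, m) for m in by_key.values())
    return pairs

left = [
    "MSH|^~\\&|A|B|C|D|20240101||ADT^A01|MSG1|P|2.5\rPID|1||123",
    "MSH|^~\\&|A|B|C|D|20240101||ADT^A08|MSG2|P|2.5\rPID|1||456",
]
right = [left[1], left[0]]  # same messages, opposite order
for a, b in pair_messages(left, right, key_field="MSH.10"):
    print(field_value(a, "MSH.10"), field_value(b, "MSH.10"))
```

With key_field="MSH.10" each message is paired with its counterpart even though the right-hand file is in reverse order; without a key, the first message would be compared with the wrong one.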

Alternatively, you can:

You may want to exclude fields from the comparison so that they are not considered at all. This lets you ignore differences in fields that don’t matter for your analysis.

To exclude fields from comparison:

Alternatively, you can:

It can be easier to provide a list of fields to include instead of excluding a large number of fields. The procedure is similar. In the Filter tab, be sure Include (instead of Exclude) is selected.

To set a large number of fields in one operation, use the 1-on-1 message comparison screen. For example, if you want to compare fields PID.2 to PID.13:

The comparison will refresh using the new field set.
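The include/exclude behavior can be illustrated with the following Python sketch. It flattens each message into keys such as PID.5 with a naive parser (default separators, each segment assumed to occur once) and reports which fields differ after applying an exclusion set or an inclusion set such as PID.2 to PID.13. The function names and sample messages are hypothetical.

```python
def fields_of(message):
    """Flatten an HL7 v2 message into {'PID.5': value, ...} (naive parser)."""
    result = {}
    for line in message.strip().split("\r"):
        values = line.split("|")
        segment = values[0]
        # MSH-1 is the field separator itself, so MSH values start at MSH-2.
        offset = 1 if segment == "MSH" else 0
        for number, value in enumerate(values[1:], start=1 + offset):
            result[f"{segment}.{number}"] = value
    return result

def compare(left, right, exclude=(), include=None):
    """Return the keys of fields whose values differ, after include/exclude."""
    a, b = fields_of(left), fields_of(right)
    keys = sorted(a.keys() | b.keys())
    if include is not None:
        # Include mode: only the listed fields are compared.
        keys = [k for k in keys if k in include]
    return [k for k in keys if k not in exclude and a.get(k) != b.get(k)]

left = "MSH|^~\\&|A|B|C|D|20240101120000||ADT^A08|MSG1|P|2.5\rPID|1||123||Smith^John"
right = "MSH|^~\\&|A|B|C|D|20240102080000||ADT^A08|MSG2|P|2.5\rPID|1||123||Smith^Jon"
print(compare(left, right))  # ['MSH.10', 'MSH.7', 'PID.5']
print(compare(left, right, exclude={"MSH.7", "MSH.10"}))  # ['PID.5']
pid_2_to_13 = {f"PID.{n}" for n in range(2, 14)}
print(compare(left, right, include=pid_2_to_13))  # ['PID.5']
```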

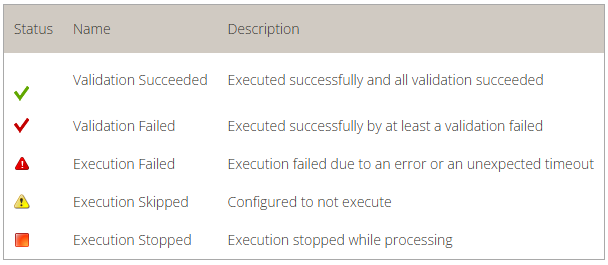

After the comparison is completed, message pairs can have one of the following statuses:

On the bottom left of the screen, the message pair count for each status is listed.

Message pairs can be shown/hidden based on their status. For instance, to hide identical messages:

Identical messages are filtered so only changed and unmatched messages are listed.

An Excel or PDF report can be generated to document the status of all messages. This report can be used, for instance, to document that the transformation code met all requirements at some point in time.

To generate this report:

The report contains:

| Option | Description |
|---|---|
| Automatically apply changes | If checked, the differences will be calculated each time a significant setting has changed. |
| Treat missing and empty fields as equivalent | If checked, the algorithm will consider missing and empty fields as equivalent. Ex: ‘OBX\|\|AD\|\|\|\|\|’ and ‘OBX\|\|AD’ will not be flagged as different. ‘PID\|\|\|\|\|Smith^John^’ and ‘PID\|\|\|\|\|Smith^John’ will not be flagged as different. |
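As a rough model of the Treat missing and empty fields as equivalent option, the following Python sketch drops trailing empty components and trailing empty fields before comparing, which reproduces the two examples in the table. It assumes the default | and ^ separators and ignores repetitions and subcomponents; the function names are hypothetical and this is not Workgroup's algorithm.

```python
def normalize(segment):
    """Treat missing and empty trailing fields/components as equivalent."""
    # 'Smith^John^' -> 'Smith^John': drop trailing empty components.
    fields = [field.rstrip("^") for field in segment.split("|")]
    # 'OBX||AD|||||' -> 'OBX||AD': drop trailing empty fields.
    while len(fields) > 1 and fields[-1] == "":
        fields.pop()
    return "|".join(fields)

def equivalent(a, b, missing_equals_empty=True):
    if not missing_equals_empty:
        return a == b
    return normalize(a) == normalize(b)

print(equivalent("OBX||AD|||||", "OBX||AD"))  # True
print(equivalent("PID|||||Smith^John^", "PID|||||Smith^John"))  # True
print(equivalent("OBX||AD|||||", "OBX||AD", missing_equals_empty=False))  # False
```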

Caristix Workgroup comes with several features that help you with HL7 messaging:

Caristix Workgroup helps interface analysts and engineers to accurately de-identify HL7 data, covering all 18 HIPAA identifiers. Data can then be safely shared for such purposes as porting realistic data to a test system or staging area, providing realistic sample HL7 messages for interface scoping, and providing data for clinical and financial analytics.

The following features and functionality are included:

One of the most important issues in healthcare IT is the protection of patient data. Regulation addresses patient privacy and the use of health information in many countries. In the US, HIPAA regulates the use of PHI (protected health information).

While protecting patient data, HL7 analysts need to share or redistribute HL7 production data for such purposes as porting realistic data to a test system or staging area, providing realistic sample HL7 messages for interface scoping, and providing data for clinical and financial analytics.

The Department of Health and Human Services (HHS) provides a HIPAA Privacy Rule booklet (PDF) that highlights the 18 criteria that can be used to identify patients. All 18 identifiers are categories of data that must be protected. Besides easily recognized personal information, care must be given to protect device identifiers and even IP addresses. De-identification techniques must cover all 18 identifiers.

De-identification refers to removing or masking protected information. It removes identifiers from a data set so that information can no longer be linked to a specific individual. In terms of health care information, all identifiers are removed from the information set, including both personally identifiable information (PII) and protected health information (PHI).

As a subset of de-identification, pseudonymization replaces data elements with new identifiers. After that substitution, the initial subject cannot be associated with the data set. In terms of health care information, patient information can be pseudonymized by replacing patient-identifying data with completely unrelated data, resulting in a new patient profile. The data appears complete and the data context is preserved while patient information is completely protected.

A pseudonymized data set can be restored to its original state through re-identification. In re-identifying data, a reverse mapping structure (constructed as the data was pseudonymized) is applied. As an example, a pseudonymized data set could be sent for processing to an external system. Once that processed information is returned, the data could be re-identified and pushed to the correct patient file.
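A minimal Python sketch of pseudonymization with a reverse mapping, assuming a simple in-memory dictionary: each original value consistently receives the same random pseudonym, and the reverse table is what makes re-identification possible. The class name and the pseudonym format are hypothetical. Because the reverse mapping re-identifies the data, it is itself sensitive and must be protected like the original data.

```python
import secrets

class Pseudonymizer:
    """Consistent pseudonyms plus a reverse mapping for re-identification."""

    def __init__(self):
        self._forward = {}  # original value -> pseudonym
        self._reverse = {}  # pseudonym -> original value

    def _new_pseudonym(self):
        # Random, unrelated value; retry in the unlikely case of a collision.
        while True:
            candidate = "P" + secrets.token_hex(4).upper()
            if candidate not in self._reverse:
                return candidate

    def pseudonymize(self, value):
        # The same original value always maps to the same pseudonym,
        # so a patient's messages stay linked to one another.
        if value not in self._forward:
            pseudonym = self._new_pseudonym()
            self._forward[value] = pseudonym
            self._reverse[pseudonym] = value
        return self._forward[value]

    def reidentify(self, pseudonym):
        # Apply the reverse mapping built during pseudonymization.
        return self._reverse[pseudonym]

p = Pseudonymizer()
alias = p.pseudonymize("123456")
print(alias == p.pseudonymize("123456"), p.reidentify(alias))  # True 123456
```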

Identifiers are data elements that can directly identify individuals. This includes name, email address, telephone number, home address, social security number, and medical card number, among others. Two identifiers may be needed to identify a unique individual.

Data elements of this type do not directly identify an individual but may provide enough information to narrow the potential of identifying a specific individual. Gender, date of birth and zip/postal code have been studied extensively in this context. There is a dependent relationship between quasi-identifiers and the type of data set of which they are a part. As an example, if all members of a data set are male, gender cannot be a meaningful quasi-identifier. In addition, quasi-identifiers are categorical in nature with a finite set of discrete values. It’s relatively easy to search for individuals using quasi-identifiers.

Non-identifiers may contain an individual’s personal information but aren’t helpful in reconstructing the initial information. For example, an indicator of an allergy to pollen would be a non-identifying data element. The incidence of such an allergy is extremely high in the general population. Therefore this factor is not a good discriminator among individuals. Again, non-identifiers are dependent on data sets. In the right context, they may be used to identify an individual.

De-identification in Workgroup works as follows:

Load the HL7 message that requires de-identification:

The log is loaded in the Messages tab. The tab also indicates the number of messages in the viewing pane and the total number of messages in the file you loaded. The Original pane displays the log you loaded while the De-identified pane displays the de-identified log. The split screens scroll synchronously so that the data displayed is mirrored in the original and de-identified logs.

Resize vertically to change the quantity of data displayed in the viewing pane. Place the pointer on the line dividing the two panes and drag the window to increase or decrease its size. Click the Hide and Show buttons to hide or view panes as needed.

The fields and data types set for de-identification are highlighted in red for easy visibility.

On the left side of the screen are the de-identification settings listed under the Fields and Data Types tabs. Workgroup loads settings to cover the 18 HIPAA identifiers by default.

To add a de-identification rule under Fields or Data Types:

To remove a setting, click the trashcan at the end of the line.

Once you have created and configured all the selectors applicable to the HL7 log to be de-identified, click View Example at the bottom of the left hand panes. A preview of the de-identified log file will appear. Scroll through the log in the viewing pane to verify the potential results of the de-identification process.

Once reviewed and after applying any changes:

Once saved, a De-identification Process Report dialogue box will open asking if you wish to create a de-identification process report. Click Yes or No. If Yes is clicked, you will be prompted to choose a location to save the generated PDF and to give a name to the file. Click Save and the file will be saved to the specified location. The PDF of the De-identification Process Summary will open on your desktop for review. You can also save the file on your local computer by using Browse My Computer.

Once a set of selectors has been chosen for the de-identification of a log file, that set can be saved for reuse.

Once a log file has been opened, saved de-identification rules can be applied by clicking File > Open > De-Id Rules in the top menu bar.

Generators refer to the data sources used to set de-identification values in Workgroup.

| Generator | Recommended Use |

| String | Insert a randomly generated string or static value. You can set the length and other parameters. |

| Boolean | Insert a Boolean value (true or false). |

| Numeric | Insert a randomly generated number. You can set the length, decimals and other parameters. |

| Date Time | Insert a randomly generated date-time value. You can set the range, time unit, format, and other parameters. |

| Table | Pull data from HL7-related tables stored in one of your profiles, useful for coded fields. |

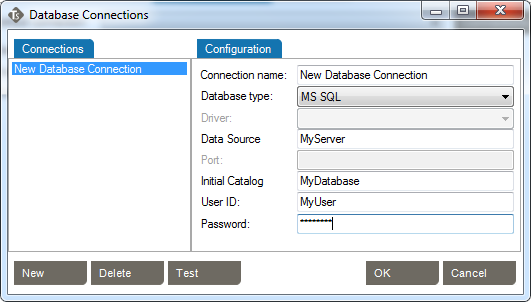

| SQL Query | Pull data from a database based on an SQL query. You’ll be able to configure a database connection. |

| Text | Pull random de-identification data from a text file — for instance, a list of names. Several file formats can be used: txt, csv, etc |

| Excel | Pull random de-identification data from an Excel 2007 or later spreadsheet — for instance, a list of names, addresses, and cities. |

| Use Original Value | Keep the field as-is. No de-identification rules will be applied. |

| Copy Another Field | Copy the contents of another field. |

| Unstructured Data | Find and replace sensitive data in free text fields — for instance, find and replace a patient’s last name in physician notes. |

Each generator has its own settings, which you can edit from the Value Generator tab. Click on the generator name to navigate to the setting details.

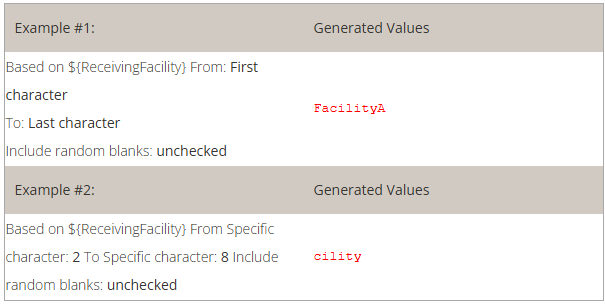

Allows you to use more than one generator for a single field, edit the output format or preformat values. You can also set preconditions to conditionally apply the de-identification rule.

(Only available in Advanced Mode)

Use this to format the original value before it is processed.

This is useful for generators that include the original value or ID fields. Here are two usage examples:

a) In an unstructured data field, you may wish to remove a value that is not contained elsewhere (not already cloaked in another field):

If you know the field may contain a reference to an ID defined as ‘ID-999999’, you would:

1. Cloak the field using an Unstructured Data generator.

2. Set the following preformat for the unstructured data:

| Setting | Value | Explanation |
|---|---|---|
| Find what: | ID-\d+ | Search for text, anywhere in the field value, starting with ‘ID-’ and followed by one or more digits. |
| Replace by: | ID-XXXX | Set a static text to hide the ID but still keep the context of the text. |
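The same Find what / Replace by pair can be tried outside Workgroup. This Python sketch uses re.sub, and the pattern ID-\d+ behaves the same way in Python's re as in .NET for this simple case. The function name and the sample note text are hypothetical.

```python
import re

def preformat(value, find_what, replace_by):
    """Replace every match of find_what in value, like a Find/Replace preformat."""
    return re.sub(find_what, replace_by, value)

note = "Follow-up for ID-483920, see also ID-77."
print(preformat(note, r"ID-\d+", "ID-XXXX"))
# Follow-up for ID-XXXX, see also ID-XXXX.
```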

b) If you have the same patient ID number in two systems, but formatted differently, you could format them so that both systems change to the same ID format and can both be recognized as the same patient. Having the same ID will provide continuity of the message flow for a patient (messages will be cloaked using the same fake data):

If, for example, PID.2 is defined like this for the two systems:

First system: ID:123456

Second system: 123-456

You would need to:

1. Set the field PID.2 as an ID (by checking the ID column).

2. Define two preformats like this:

| Setting | Value | Explanation |
|---|---|---|
| Find what: | ^ID:(?<ID_Number>\d+)$ | Find an exact match for the first system’s format and capture only the digits in a group variable named ‘ID_Number’. |
| Replace by: | ${ID_Number} | Keep only the number, removing the superfluous text. |

| Setting | Value | Explanation |
|---|---|---|
| Find what: | ^(?<ID_Number_Part_1>\d+)-(?<ID_Number_Part_2>\d+)$ | Find an exact match for the second system’s format and capture the two digit groups in group variables named ‘ID_Number_Part_1’ and ‘ID_Number_Part_2’. |
| Replace by: | ${ID_Number_Part_1}${ID_Number_Part_2} | Keep only the number, removing the superfluous text. |

Now both systems will treat PID.2 as being ‘123456’ and match and cloak the messages properly as being the same patient.
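The two preformats can be checked with the following Python sketch, written for the two formats ID:123456 and 123-456. Python's re module writes named groups as (?P<name>...) and refers to them in the replacement as \g<name>, where .NET uses (?<name>...) and ${name}. The names PREFORMATS and normalize_id are hypothetical.

```python
import re

# .NET (?<name>...) / ${name} written in Python's (?P<name>...) / \g<name> syntax.
PREFORMATS = [
    (r"^ID:(?P<ID_Number>\d+)$", r"\g<ID_Number>"),
    (r"^(?P<ID_Number_Part_1>\d+)-(?P<ID_Number_Part_2>\d+)$",
     r"\g<ID_Number_Part_1>\g<ID_Number_Part_2>"),
]

def normalize_id(value):
    """Apply each preformat in turn; non-matching values pass through unchanged."""
    for find_what, replace_by in PREFORMATS:
        value = re.sub(find_what, replace_by, value)
    return value

print(normalize_id("ID:123456"), normalize_id("123-456"))  # 123456 123456
```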

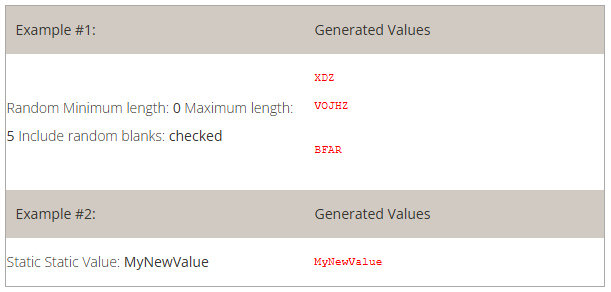

This generator creates an uppercase character string to be used to set a static value.

How to use the “String” generator to create random value:

How to use the “String” generator to set a static value:

How to use the “String” generator to set a Lorem Ipsum text:

| Example #1: | Generated Values | ||||


| Example #2: | Generated Values | ||||


This generator creates a Boolean (True or False) value.

How to use the Boolean generator:

| Example #1: | Generated Values | |||||


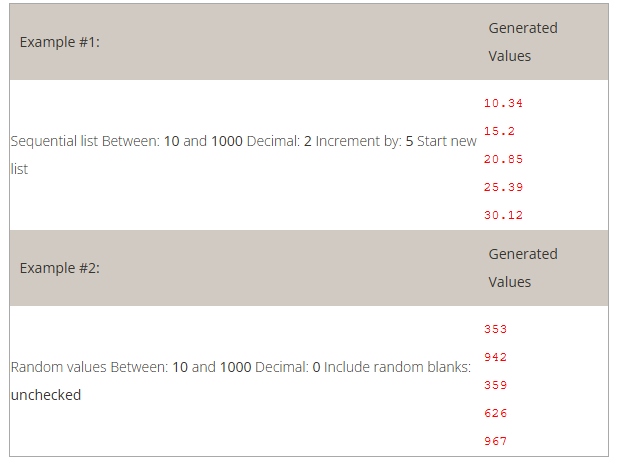

This generator creates a number.

How to use the “Numeric” generator:

| Example #1: | Generated Values | |||||


| Example #2: | Generated Values | |||||


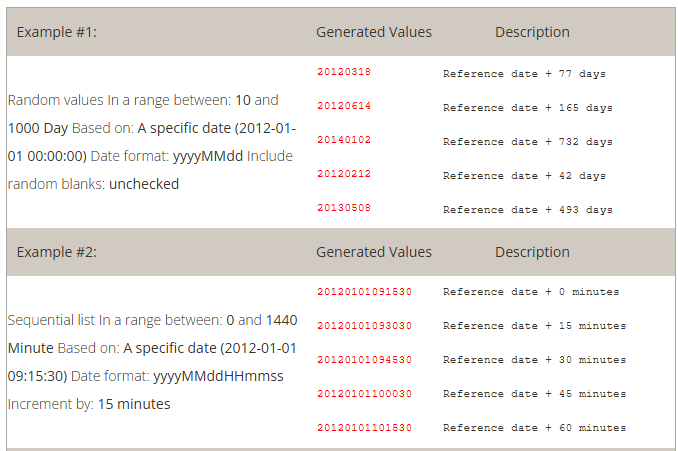

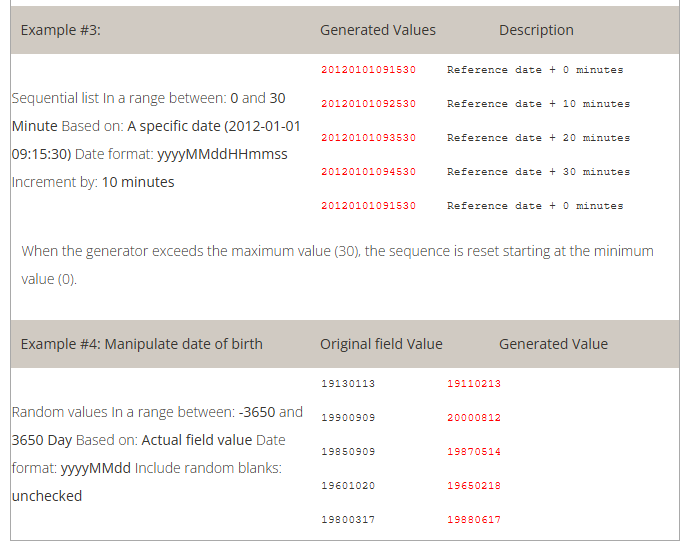

This generator creates date and time values.

How to use the “Date time” generator:

| Example #1: | Generated Values | Description | ||||||||||


| Example #2: | Generated Values | Description | ||||||||||


| Example #3: | Generated Values | Description | ||||||||||


When the generator exceeds the maximum value (30), the sequence is reset starting at the minimum value (0).

| Example #4: Manipulate date of birth | Original field Value | Generated Value | ||||||||||

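The reset behavior described for Example #3 can be sketched in Python. The original example settings were not preserved, so the step (10) and the time unit (days) are assumptions; only the minimum (0), the maximum (30) and the wrap-around rule come from the text. The function names are hypothetical.

```python
from datetime import date, timedelta
from itertools import islice

def wrapping_sequence(minimum, maximum, step):
    """Yield minimum, minimum + step, ...; restart at minimum once maximum is exceeded."""
    value = minimum
    while True:
        yield value
        value += step
        if value > maximum:
            value = minimum

def sequential_dates(base, minimum=0, maximum=30, step=10):
    """Hypothetical: offset a base date by the wrapping sequence, in days."""
    for offset in wrapping_sequence(minimum, maximum, step):
        yield base + timedelta(days=offset)

print(list(islice(wrapping_sequence(0, 30, 10), 6)))  # [0, 10, 20, 30, 0, 10]
```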

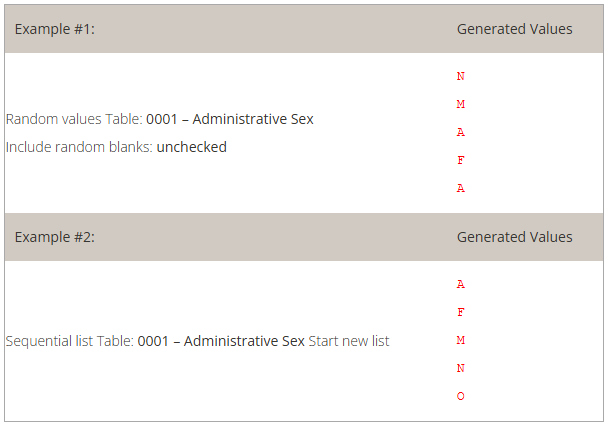

This generator pulls data from HL7-related tables stored in a profile. Read how to set the profile.

How to configure the generator to use the appropriate HL7 table:

| Example #1: | Generated Values | |||||


| Example #2: | Generated Values | |||||


This generator pulls data from an SQL-accessible database.

How to configure this generator to use SQL query results as de-identified values:

| Example #1: | Generated Values | |||||


This generator pulls data from a text file (*.txt, *.csv, etc).

How to configure this generator to use text file content:

Note: If more than one field is configured using the same text file, the same line will be used within the same message. In other words, you can use a text file to ensure several values will be used together. This can be useful when linking a city with a zip code or a first name with a gender.

The examples below use the following content in a file C:\MyDocuments\myFile.txt

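The note above describes per-message line reuse. The Python sketch below models it under stated assumptions: the file is comma-separated, a line is chosen at random the first time a message needs it, and every later field of the same message reuses that line. The class name, file content, and message IDs are hypothetical.

```python
import random
import tempfile
from pathlib import Path

class TextFileGenerator:
    """Pick one line per message so every field reading this file stays consistent."""

    def __init__(self, path: Path, rng: random.Random, sep: str = ","):
        # Skip blank lines; `sep` is an assumed column separator.
        self.rows = [line.split(sep) for line in path.read_text().splitlines() if line.strip()]
        self.rng = rng
        self._chosen = {}  # message ID -> line (list of columns) already drawn

    def value(self, message_id: str, column: int) -> str:
        # The first field of a message draws a line; later fields reuse it.
        if message_id not in self._chosen:
            self._chosen[message_id] = self.rng.choice(self.rows)
        return self._chosen[message_id][column]

# Hypothetical file content: one "city,zip" pair per line.
tmp = Path(tempfile.mkdtemp()) / "myFile.txt"
tmp.write_text("Anycity,12345\nAnothercity,98765\n")

gen = TextFileGenerator(tmp, random.Random(3))
city = gen.value("MSG001", 0)      # e.g. PID.11.3 (city)
zip_code = gen.value("MSG001", 1)  # e.g. PID.11.5 (zip), same line as the city
print(city, zip_code)
```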

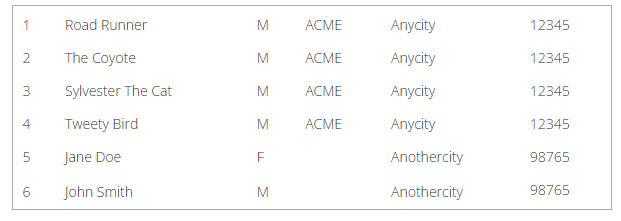

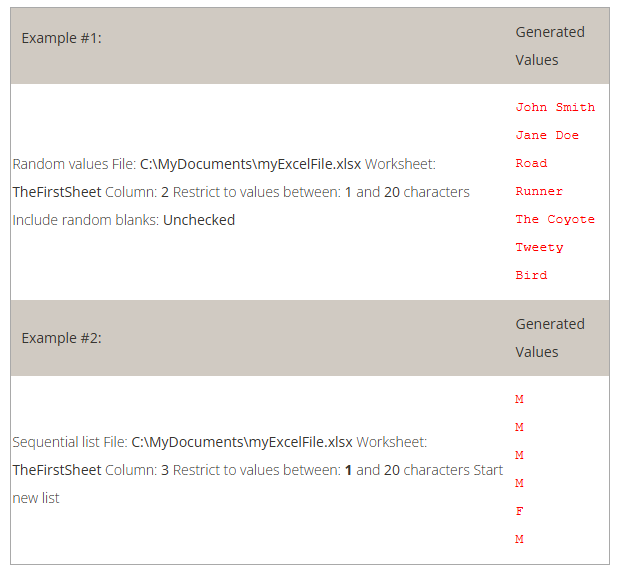

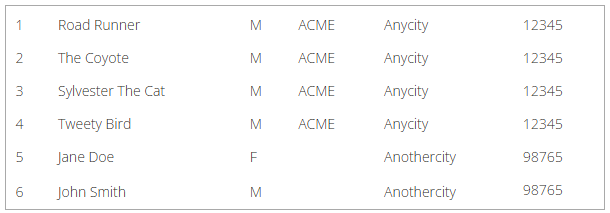

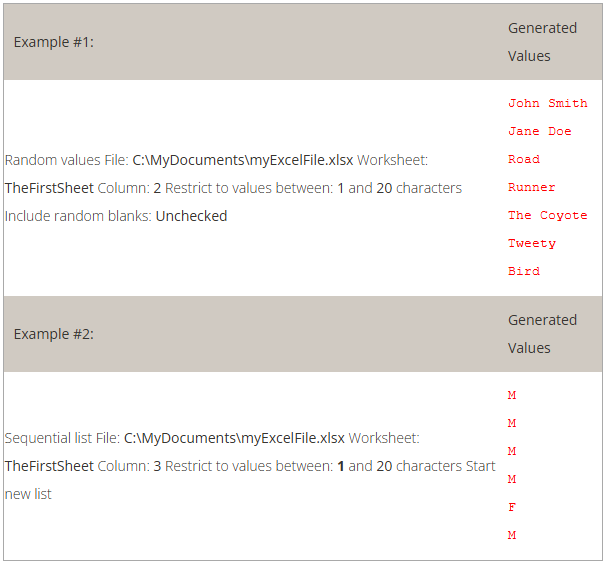

This generator pulls data from an Excel 2007+ file (*.xlsx).

How to configure the generator to use Excel file content:

Note: If more than one field is configured using the same worksheet, the same row will be applied across a message. In other words, you can use an Excel file to ensure that several values will be used together. This can be useful when linking a city with a zip code or a first name with a gender.

The examples below use the following content from a file named C:\MyDocuments\myExcelFile.xlsx:

| A | B | C | D | E | F |
|---|---|---|---|---|---|
| 1 | Road Runner | M | ACME | Anycity | 12345 |
| 2 | The Coyote | M | ACME | Anycity | 12345 |
| 3 | Sylvester The Cat | M | ACME | Anycity | 12345 |
| 4 | Tweety Bird | M | ACME | Anycity | 12345 |
| 5 | Jane Doe | F | | Anothercity | 98765 |
| 6 | John Smith | M | | Anothercity | 98765 |

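The same-row behavior for Excel files can be sketched with the sample worksheet above. This sketch assumes the rows have already been read from the .xlsx file (for example with a library such as openpyxl, not shown) and uses a hypothetical round-robin strategy to pick one row per message. The PID field mapping is illustrative.

```python
# A few rows of the sample worksheet (columns A-F), assumed to be already loaded.
ROWS = [
    ["1", "Road Runner", "M", "ACME", "Anycity", "12345"],
    ["2", "The Coyote", "M", "ACME", "Anycity", "12345"],
    ["5", "Jane Doe", "F", "", "Anothercity", "98765"],
]

def row_for_message(message_index: int) -> list:
    """Hypothetical strategy: walk the worksheet one row per message."""
    return ROWS[message_index % len(ROWS)]

def deidentified_fields(message_index: int) -> dict:
    """Every field of one message is filled from the same row."""
    row = row_for_message(message_index)
    return {
        "PID.5": row[1],     # patient name (column B)
        "PID.8": row[2],     # administrative sex (column C)
        "PID.11.3": row[4],  # city (column E)
        "PID.11.5": row[5],  # zip code (column F)
    }

for i in range(4):
    print(i, deidentified_fields(i))
```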

This generator is used when you don’t want a data element to be changed. Here are two use case examples.

If the data type Extended Person Name (XPN) is part of the list of data types to de-identify, you might need to preserve some of the fields that use this data type.

| Data Type | Component | Generator |
|---|---|---|
| XPN | 2 – Given Name | Excel File |
| FN | 1 – Surname | Excel File |

| Segment | Field | Component | Subcomponent | ID | Generator |
|---|---|---|---|---|---|
| PV1 | 7 – Attending Doctor | | | | Use Original Value |

With this configuration, all names are de-identified except the attending doctor’s name.
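The configuration above implies that a field-level rule takes precedence over a data type rule. The following simplified Python model of that precedence assumes each field is described by the set of data types it contains; all dictionaries and the `resolve_generator` function are hypothetical.

```python
from typing import Optional

# Hypothetical rules mirroring the two configuration tables above.
DATA_TYPE_RULES = {"XPN": "Excel File", "FN": "Excel File"}
FIELD_RULES = {("PV1", 7): "Use Original Value"}

# Data types carried by a few fields (simplified: in HL7 v2.5, XPN and XCN
# both hold the family name in an FN component).
FIELD_TYPES = {
    ("PID", 5): {"XPN", "FN"},  # Patient Name
    ("NK1", 2): {"XPN", "FN"},  # Next of Kin Name
    ("PV1", 7): {"XCN", "FN"},  # Attending Doctor
}

def resolve_generator(segment: str, field: int) -> Optional[str]:
    """Return the generator for a field; field-level rules win over data type rules."""
    if (segment, field) in FIELD_RULES:
        return FIELD_RULES[(segment, field)]
    for data_type in sorted(FIELD_TYPES.get((segment, field), set())):
        if data_type in DATA_TYPE_RULES:
            return DATA_TYPE_RULES[data_type]
    return None  # no rule applies in this sketch

for key in [("PID", 5), ("PV1", 7), ("PID", 3)]:
    print(key, resolve_generator(*key))
```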

Prevent de-identifying a field that is defined as an ID

Field IDs must have a generator associated with them, but if for some reason you prefer to keep the original value, you can select this generator to avoid any change to that value.

Re-use the original data and combine it with other generators

In Advanced Mode, you can de-identify the original value by specifying several generators, and you can also include the original value to combine it with other generated values.

This generator replicates the value from another de-identified field.

How to use the “Copy Another Field” generator:

Example 1: copy the replacement MRN value from PID.2 to ZCA.3
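A minimal Python sketch of Example 1, assuming messages are plain pipe-delimited HL7 v2 text with carriage-return segment separators and that PID.2 has already been de-identified by another generator (represented here by a random number). The helper functions are hypothetical and only handle non-MSH segments.

```python
import random

def get_field(message: str, seg_id: str, index: int) -> str:
    """Value of SEG-index in the first matching segment (non-MSH segments only)."""
    for seg in message.split("\r"):
        fields = seg.split("|")
        if fields[0] == seg_id:
            return fields[index] if index < len(fields) else ""
    return ""

def set_field(message: str, seg_id: str, index: int, value: str) -> str:
    """Return a copy of the message with SEG-index of the first matching segment set to value."""
    segments = message.split("\r")
    for i, seg in enumerate(segments):
        fields = seg.split("|")
        if fields[0] == seg_id:
            fields += [""] * (index + 1 - len(fields))  # pad a short segment
            fields[index] = value
            segments[i] = "|".join(fields)
            break
    return "\r".join(segments)

msg = "MSH|^~\\&|App|Fac\rPID|1|000123\rZCA|1||000123"
# Stand-in for whatever generator de-identifies PID.2.
msg = set_field(msg, "PID", 2, str(random.Random(5).randint(100000, 999999)))
# ZCA.3 receives PID.2's replacement value, not the original MRN.
msg = set_field(msg, "ZCA", 3, get_field(msg, "PID", 2))
print(msg.replace("\r", "\n"))
```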

Sensitive data can be found in unstructured data (free text) such as clinician notes or other narrative text. Most of the data within an unstructured field is not sensitive, but there are times when it might contain data elements you want to protect.

This generator replaces any piece of information in the free-text field that matches a value from another message field set for de-identification.

In the following message, the name of the patient is mentioned in the patient update note (NTE.3).

If the patient name (PID.5.1 field) is listed among the de-identification rules, you can configure a new field to detect the patient name within NTE.3.

| Segment | Field | Component | Subcomponent | ID | Generator |
|---|---|---|---|---|---|
| PID | 5 – Patient Name | 1 – Family Name | | | Excel File |
| NTE | 3 – Comment | | | | Unstructured Data |

Using these settings, the de-identified message will look like this:

If the patient ID (PID.2 field) is listed among the de-identification rules, you can configure a new field to detect the patient ID within NTE.3.

| Segment | Field | Component | Subcomponent | ID | Generator |
|---|---|---|---|---|---|
| PID | 2 – Patient ID | | | | Numeric |
| PID | 5 – Patient Name | 1 – Family Name | | | Excel File |
| NTE | 3 – Comment | | | | Unstructured Data |

Using these settings, the de-identified message will look like this:
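The following Python sketch illustrates the idea: collect each original value and its replacement from the de-identified fields, then substitute them inside the free text. Whole-word, case-insensitive matching and longest-value-first ordering are choices made for this sketch, not documented Workgroup behavior; the values are made up.

```python
import re

def scrub_free_text(text: str, replacements: dict) -> str:
    """Replace each original value found in free text with its de-identified value."""
    # Longest originals first, so a longer value is not partially rewritten
    # by a shorter one it contains.
    for original in sorted(replacements, key=len, reverse=True):
        new = replacements[original]
        pattern = r"\b" + re.escape(original) + r"\b"  # whole words only
        # A function replacement stops re from interpreting backslashes in `new`.
        text = re.sub(pattern, lambda _m: new, text, flags=re.IGNORECASE)
    return text

# Originals -> replacements produced by the PID.5.1 and PID.2 generators.
replacements = {"SMITH": "JONES", "54738474": "11223344"}
note = "Mr. Smith called; patient ID 54738474 confirmed with SMITH's daughter."
print(scrub_free_text(note, replacements))
# Mr. JONES called; patient ID 11223344 confirmed with JONES's daughter.
```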

At the end of the de-identification process, Workgroup offers the option of generating a De-identification Process Report that summarizes the process. This report can be viewed and shared. The PDF opens automatically upon completion. For later review, navigate to the folder where the PDF was stored and click the file to open it.

The De-identification Process Report has two parts:

This section of the report lists the following:

Files sub-section:

This section identifies the de-identification file name and location and presents three summary tables of the de-identification process:

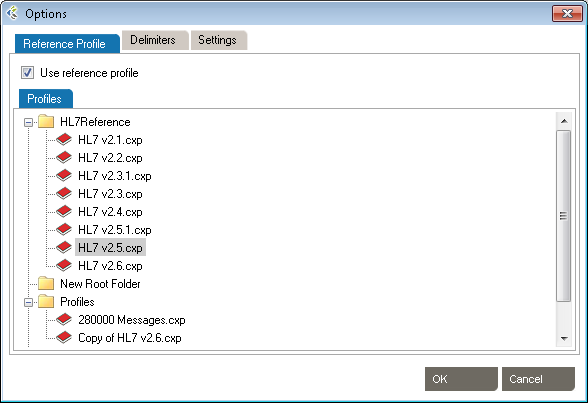

De-Identification has a number of options that can be set. From the main menu bar, click Tools, then Options. In the Options dialog box that opens, there are three categories: Reference Profile, Windows Service Settings, Delimiters and Settings.

These settings allow the use of HL7 reference profiles to parse logs. Open the Reference Profile tab.

These settings allow the addition of specific delimiters to the log file to assist with manageability and readability. They include:

Click OK to save the delimiters.

Click OK to save the settings.

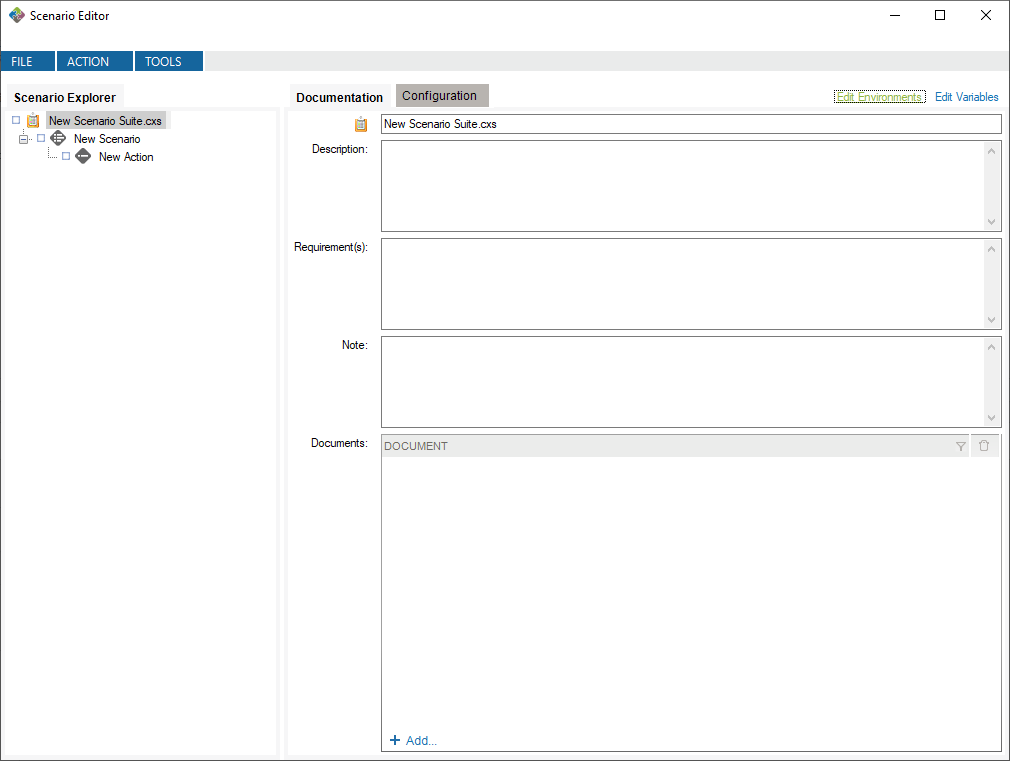

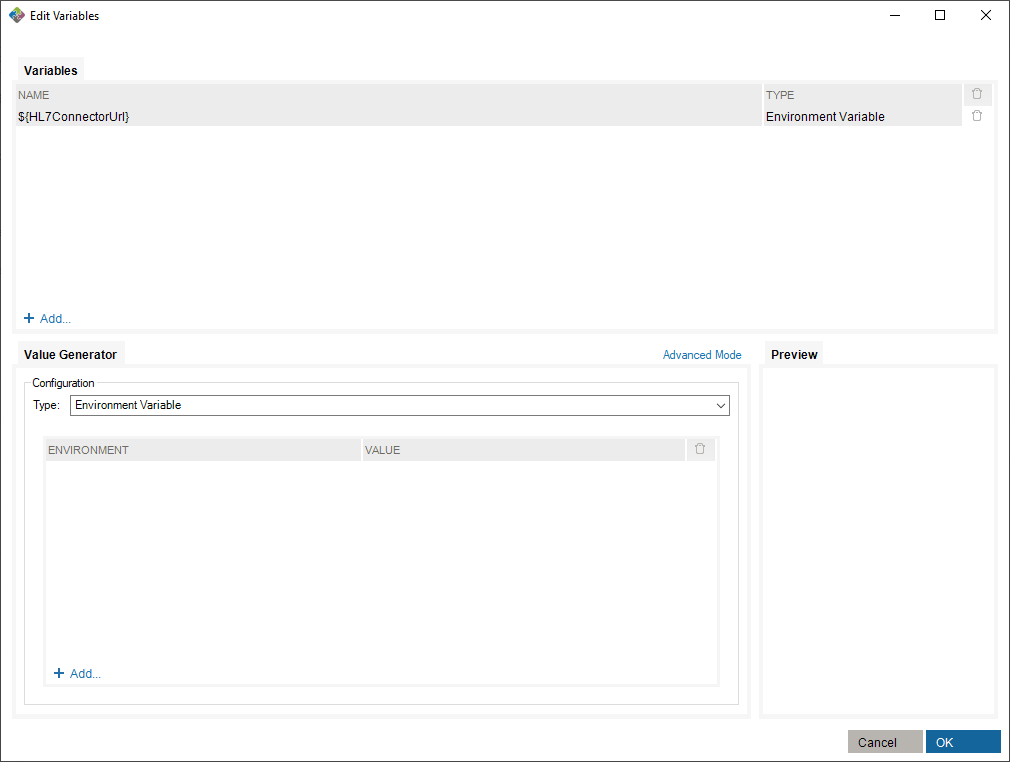

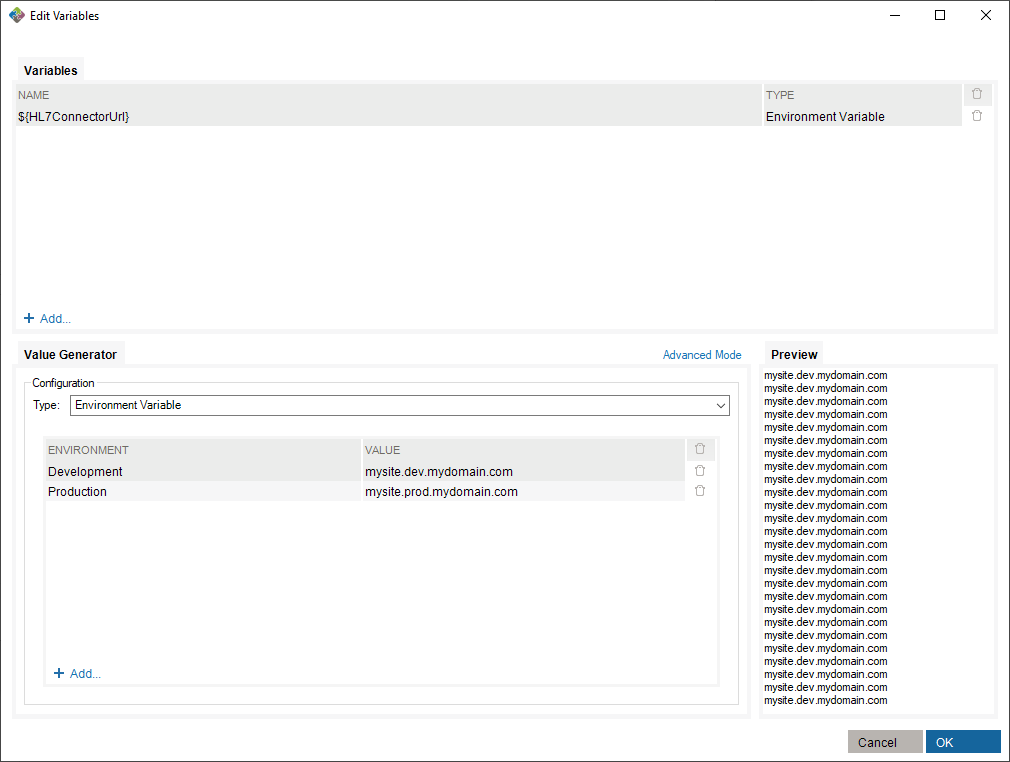

Use the Message Maker tool to create test messages to place into a scenario or to copy to another application. The messages you generate will be based on a specific profile (an HL7 version based on the reference standard, or a profile created earlier).

The Message Editor tool lets you edit content and compare HL7 messages against a profile in order to flag conformance gaps. This is useful when you need to troubleshoot data flow in a live interface that has been documented in Caristix Workgroup.

Message Editor in Workgroup works as follows:

The selected HL7 messages will be loaded in the Messages tab.

Using a profile in the message editor will enable the message validation feature. The message validation will compare the HL7 messages against the profile in order to flag conformance gaps. Such gaps could come from:

Click to de-identify the current messages. After the de-identification process is complete, the de-identified messages will replace the currently loaded messages. Take a look at the De-identification Concepts to understand this process.

When you are analyzing a message log, you sometimes need to quickly capture an overview of a message or segment.

From there you can show/hide:

If you right-click an element in the Messages Structure/Messages or Validation tab, a contextual menu opens. It contains the available actions for the selected element.

Please refer to the Search and Filter Messages documentation to work with Data Filters and Sort Queries.

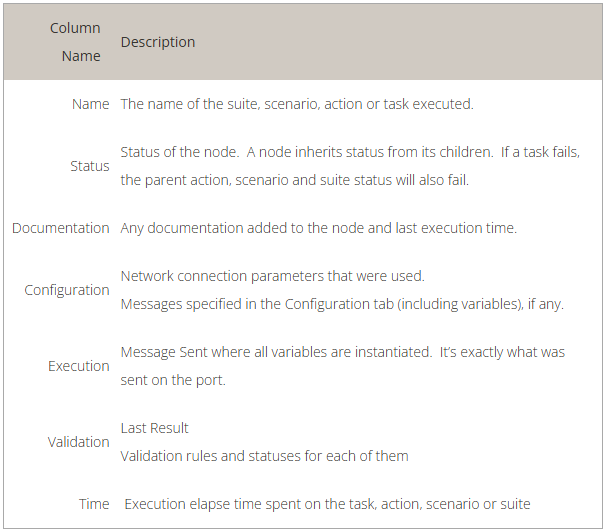

The Message Editor tool lets you compare an HL7 message against a profile in order to flag conformance gaps. This is useful when you need to troubleshoot data flow in a live interface that has been documented in Caristix Workgroup. The Validation tab displays conformance gaps flagged by the application.

Caristix Workgroup helps interface analysts, engineers, and technical support team members to quickly find HL7 data needed for interfacing tasks and customer service. It provides the following features and functionality:

You can save your searches and filters as a file. A Search and Filter Rules file is used to persist Data Filters, Sort Queries and Data Distribution entries for reuse.

* You can also open a Search and Filter Rules file by right-clicking anywhere in the Data Filters, Sorts or Data Distributions section and clicking the “Open Search and Filter Rules…” menu item.

* You can also save a Search and Filter Rules file by right-clicking anywhere in the Data Filters, Sorts or Data Distributions section and clicking the “Save Search and Filter Rules…” menu item.

If you’ve already opened a Search and Filter Rules file, it will be added to the recent files in order to be quickly accessible. To open a recently opened file…

Check “Use Large File mode” when loading files above 10MB in size. (This option will deactivate the Sort, Replace and Edit Message features.)


Data filters let you set up queries to find messages containing specific data such as patient IDs, names, and order type codes. Queries can filter on specific message elements: segments, fields, components, and sub-components.

This is the recommended method for building data filters. Once you’ve built a query, you can then modify the Filter Operators to change your filter criteria.

This is an alternate method for building data filters and is helpful when applying complex filter operators.

You can also add filters from the Message Definition tree:

From the messages area, you can also view and edit the segment/field definition and legal values (if the field is linked to a table).

Data filter queries can be made case-sensitive. This is helpful when you need to identify data that might have been entered in all caps (JOHN SMITH) instead of title case (John Smith).

You can create filters that query the entire log, instead of a single segment or field. Simply omit the segment and field from the filter. The results in the Messages area cover all occurrences of the value you specified in the filter.
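A simplified Python sketch of an equality filter applied to one message at a time: it can target one field or, when no segment and field are given, every field, with optional case sensitivity. It assumes pipe-delimited HL7 v2 messages and compares against each field and its components; the function name and parameters are hypothetical.

```python
from typing import Optional

def message_matches(message: str, value: str, segment: Optional[str] = None,
                    field: Optional[int] = None, case_sensitive: bool = True) -> bool:
    """True if `value` equals a field or component, in one field or anywhere in the message."""
    norm = (lambda s: s) if case_sensitive else str.casefold
    target = norm(value)
    for seg in message.split("\r"):
        fields = seg.split("|")
        if segment is not None and fields[0] != segment:
            continue
        if field is not None:
            # MSH-1 is the separator itself, so MSH-n sits at list index n-1.
            pos = field - 1 if fields[0] == "MSH" else field
            candidates = fields[pos:pos + 1]
        else:
            candidates = fields[1:]  # no field given: search every field
        for f in candidates:
            if norm(f) == target or target in [norm(part) for part in f.split("^")]:
                return True
    return False

msg = "MSH|^~\\&|MyApplication|Fac\rPID|1|54738474|||SMITH^JOHN"
print(message_matches(msg, "Smith", "PID", 5))                        # False
print(message_matches(msg, "Smith", "PID", 5, case_sensitive=False))  # True
print(message_matches(msg, "54738474"))                               # True
```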

While editing your filters, you can switch between Basic and Advanced Mode. Advanced Mode shows you advanced settings for your filters. These settings help you to construct more complex filters using AND/OR operators and parentheses for nesting. Otherwise, each filter will be applied one after the other.

If your filters contain advanced settings and you switch back to Basic Mode, these settings will be lost.
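To see why parentheses matter in Advanced Mode, the Python sketch below evaluates the three conditions from the example that follows with two different groupings. Messages are flattened to a dictionary of element paths for brevity; `eq`, `and_`, and `or_` are hypothetical helpers, not Workgroup APIs.

```python
from typing import Callable, Dict

Message = Dict[str, str]              # simplified: element path -> value
Predicate = Callable[[Message], bool]

def eq(path: str, value: str) -> Predicate:
    return lambda m: m.get(path) == value

def and_(*preds: Predicate) -> Predicate:
    return lambda m: all(p(m) for p in preds)

def or_(*preds: Predicate) -> Predicate:
    return lambda m: any(p(m) for p in preds)

a = eq("MSH.3", "MyApplication")
b = eq("PID.2.1", "54738474")
c = eq("PID.18", "P5847373")

grouped_right = and_(a, or_(b, c))  # MSH.3 AND (PID.2.1 OR PID.18)
grouped_left = or_(and_(a, b), c)   # (MSH.3 AND PID.2.1) OR PID.18

other_app = {"MSH.3": "OtherApp", "PID.2.1": "1", "PID.18": "P5847373"}
print(grouped_right(other_app), grouped_left(other_app))  # False True
```

The message from another application only passes when the PID.18 condition sits outside the AND group, so the placement of the parentheses decides whether it is included.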

In this example, we want to create filters to get messages where (MSH.3 = MyApplication) and (PID.2.1 = 54738474) or (PID.18 = P5847373).

These filters will include the following messages:

Data filters let you select a subset of messages from the logs you load in Workgroup. The operators let you build filter queries, ranging from simple to complex. The most basic operator set consists of the use of “is” and “=”.

These are the default operators in the Add Data Filter command, available on the right-click dropdown menu in the Messages area.

The other data filter operators let you build sophisticated filters for analyzing the HL7 data in your log. (Learn how data filters work in the section on Working with Data Filters.)

Sort queries sort a log on a message element (segment, field, component, or subcomponent).

Sorting data is useful when you want to group messages by criteria such as patient name, date, or location.

This sort on MSH.6 reorders messages by the name of the receiving facility, in this case a patient care location.
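A minimal Python sketch of a sort query on MSH.6, assuming messages are HL7 v2 strings and that values are compared as plain text. Note the MSH offset: MSH-1 is the field separator itself, so MSH-n sits at list index n-1 after splitting on `|`. The sample messages are made up.

```python
def msh_field(message: str, index: int) -> str:
    """Return MSH field `index` (MSH-n is at list index n-1)."""
    msh = message.split("\r")[0].split("|")
    return msh[index - 1] if index - 1 < len(msh) else ""

log = [
    "MSH|^~\\&|ADT|HOSP|LAB|WARD_C|20240101||ADT^A01|1|P|2.5",
    "MSH|^~\\&|ADT|HOSP|LAB|ICU|20240102||ADT^A01|2|P|2.5",
    "MSH|^~\\&|ADT|HOSP|LAB|ER|20240103||ADT^A01|3|P|2.5",
]
# Python's sort is stable: messages with the same facility keep their log order.
by_receiving_facility = sorted(log, key=lambda m: msh_field(m, 6))
print([msh_field(m, 6) for m in by_receiving_facility])  # ['ER', 'ICU', 'WARD_C']
```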

This is the recommended method for building sorts. Once you’ve built a query this way, you can modify the Filter Operators to change your filter criteria.

This is an alternate method for building sort queries.

You can also add sort queries from the Message Definition tree. To do so:

The Data Distribution feature displays the data values in a field. For instance, it helps you quickly figure out what codes are used in a specific field or how often a specific code is used.

Data Distribution can also help you analyze how one field can impact other fields in terms of data and content. With Data Distribution, for example, it’s possible to get the list of lab result codes for each lab request code within a set of sample messages.
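Both uses of Data Distribution, counting the values of one field and grouping one field's values by another, can be sketched with Python's `collections.Counter`. The lab codes are made up, and the sketch assumes one OBR segment per message, with OBR-4 holding the request code and OBX-3 the result code.

```python
from collections import Counter, defaultdict

def field_values(message: str, seg_id: str, index: int) -> list:
    """All values of SEG-index in a message (non-MSH segments; repeated segments included)."""
    out = []
    for seg in message.split("\r"):
        fields = seg.split("|")
        if fields[0] == seg_id and index < len(fields):
            out.append(fields[index])
    return out

log = [
    "MSH|^~\\&|LAB|H|||||ORU^R01\rOBR|1|||CBC\rOBX|1|NM|WBC\rOBX|2|NM|HGB",
    "MSH|^~\\&|LAB|H|||||ORU^R01\rOBR|1|||BMP\rOBX|1|NM|NA\rOBX|2|NM|K",
    "MSH|^~\\&|LAB|H|||||ORU^R01\rOBR|1|||CBC\rOBX|1|NM|WBC",
]

# Distribution of one field: which request codes occur, and how often.
request_counts = Counter(v for m in log for v in field_values(m, "OBR", 4))

# Result codes grouped by request code.
results_by_request = defaultdict(Counter)
for m in log:
    for request in field_values(m, "OBR", 4):
        results_by_request[request].update(field_values(m, "OBX", 3))

print(request_counts)  # Counter({'CBC': 2, 'BMP': 1})
print(dict(results_by_request))
```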

All charts and tables can be copied and pasted to Word and Excel.

The pie chart displays the values that populate the field, as well as how often those values occur in the field.

The report displays which Allergen Type Code is sent, grouped by Sending Facilities.

You can also add data distribution fields from the Message Definition tree:

From the Data Distribution table view, you can add a Data Filter in order to find messages containing specific data:

Some interfacing technologies output non-standard message logs. In a raw state, they may be impossible to parse against an HL7-compliant standard. By adding a message prefix representing the extraneous data, you can load these logs in Pinpoint.
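Assuming the prefix is written as a regular expression (the regular-expression pointer below suggests so), splitting a raw log on it recovers the HL7 messages. The timestamp-and-direction prefix in this Python sketch is hypothetical.

```python
import re

# Hypothetical raw log: each message is preceded by a timestamp and a
# direction marker that is not part of HL7.
raw_log = (
    "2024-01-01 10:00:01 IN >> MSH|^~\\&|ADT|HOSP|||20240101||ADT^A01|1|P|2.5\r"
    "PID|1|54738474\n"
    "2024-01-01 10:00:05 IN >> MSH|^~\\&|ADT|HOSP|||20240101||ADT^A08|2|P|2.5\r"
    "PID|1|54738475\n"
)

# With MULTILINE, ^ matches at the start of the log and after each "\n",
# but not after "\r", so HL7 segment separators are left alone.
PREFIX = re.compile(r"^\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2} (?:IN|OUT) >> ", re.MULTILINE)

messages = [m.strip() for m in PREFIX.split(raw_log) if m.strip()]
print(len(messages))  # 2
```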

To add a message prefix:

You can also use message and segment ending delimiters.

Learn more about regular expressions here:

Workgroup works by parsing messages against a reference profile (or specification). The default setting is to parse against the HL7 version specified in the Version ID field of the MSH segment. However, you can also set the reference profile manually, as follows:

The default profile library is in %AllUsersProfile%\Application Data\Caristix\Common\Library\library.cxl. If you want to load an alternate profile library, click the Browse button.
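The selection logic described above, a manually set profile if there is one and otherwise the version in MSH-12, can be sketched as follows. The `PROFILES` mapping stands in for a profile library, and its names are hypothetical.

```python
from typing import Optional

# Hypothetical profile names keyed by HL7 version (MSH-12).
PROFILES = {"2.3": "HL7 v2.3", "2.5": "HL7 v2.5", "2.5.1": "HL7 v2.5.1"}

def reference_profile(message: str, manual: Optional[str] = None) -> Optional[str]:
    """Use the manually selected profile if set, else the version in MSH-12."""
    if manual is not None:
        return manual
    msh = message.split("\r")[0].split("|")
    # MSH-12 is list index 11; its first component is the version ID.
    version = msh[11].split("^")[0] if len(msh) > 11 else ""
    return PROFILES.get(version)

msg = "MSH|^~\\&|ADT|HOSP|LAB|ICU|20240101||ADT^A01|1|P|2.5"
print(reference_profile(msg))                      # HL7 v2.5
print(reference_profile(msg, "My Custom Profile"))  # My Custom Profile
```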

You can make your data filter queries case-sensitive. This is helpful when you need to identify data that might have been entered in all caps (JOHN SMITH) instead of title case (John Smith).

This option generates extra metadata when you save the resulting messages. This metadata contains the filters, sorts, and source file information.

By default, Search and Filter Messages will automatically apply changes that you make on filters. If you uncheck this option, changes to filters will only be applied when clicking on the Apply Changes button.

You can find and replace values in your messages. The Use filters option lets you find and replace within a field.
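Scoping a replacement to one field, as the Use filters option does, can be sketched as follows, assuming pipe-delimited HL7 v2 messages. The function is hypothetical and only handles non-MSH segments.

```python
def replace_in_field(message: str, seg_id: str, index: int, old: str, new: str) -> str:
    """Replace `old` with `new` only inside SEG-index, leaving every other field untouched."""
    segments = message.split("\r")
    for i, seg in enumerate(segments):
        fields = seg.split("|")
        if fields[0] == seg_id and index < len(fields):
            fields[index] = fields[index].replace(old, new)
            segments[i] = "|".join(fields)
    return "\r".join(segments)

msg = "MSH|^~\\&|ADT|HOSP\rPID|1|ICU-01|||DOE^JANE\rPV1|1|I|ICU^101"
# Only PV1.3 (Assigned Patient Location) changes; "ICU" in PID.2 is kept.
print(replace_in_field(msg, "PV1", 3, "ICU", "MICU").replace("\r", "\n"))
```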

You can also use the Replace tab and specify a replacement value